Data Center Network Infrastructure: Leaf–Spine, EVPN-VXLAN, and Real-World Design Practices

- Network Infrastructure in Data Centers

- Scope: What Network Infrastructure Actually Includes

- Fabric Baseline: Leaf–Spine with EVPN-VXLAN

- Physical Layer Choices

- Optics Heat and Fixture Profiles

- Security Segmentation and Visibility

- Operations and Labeling

- Deployment Blueprint

- Procurement and Lifecycle Planning

- FAQ

Key Takeaways

| Feature or Topic | Summary |

|---|---|

| Leaf–Spine Fabrics | Flat latency, horizontal scale, predictable failure domains. |

| Physical Layer | OM4/OS2 fiber, DAC/AOC policy, cooling-aware pathways. |

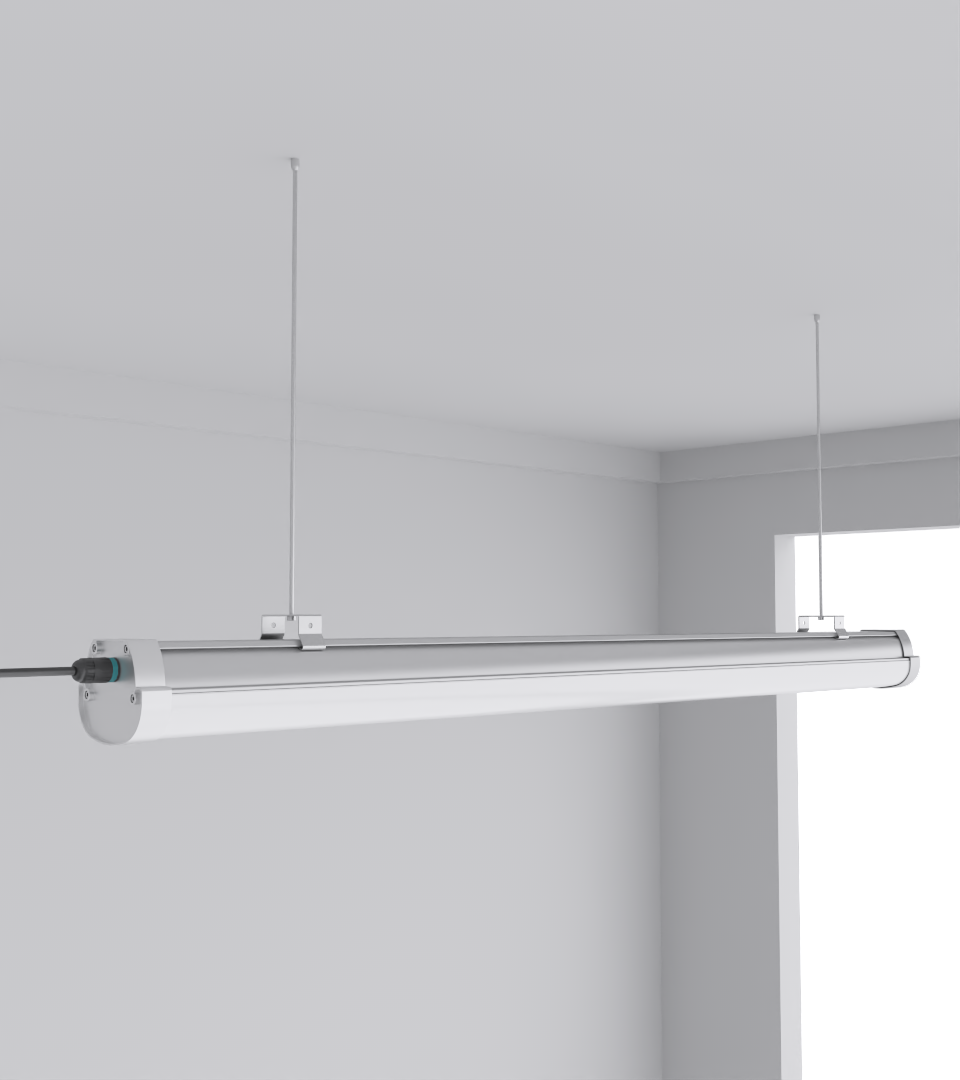

| Lighting Impact | Neutral, low-glare fixtures like Squarebeam Elite improve visibility and reduce errors. |

| Ops and Procurement | Labeling under neutral lighting, spares strategy, BOM simplicity, and lifecycle review. |

Modern data center networks aren’t just a diagram with neat arrows. They’re a knot of tradeoffs: optics budgets, cable pathway pressure, airflow, power domains, and the very human problem of someone needing to read a label at 2 a.m. without glare. At a high level, we tie compute islands together with predictable latency, isolate east-west traffic, and remove the single points of failure that quietly become the office villain later. At the floor level, we keep trays passable, patch fields visible, and light fixtures clear of tray bends.

1) Scope: What Network Infrastructure Actually Includes (and What It Touches)

When teams say “network,” they often mean switches and routers. Real operations pull in more: structured cabling, trays and ladders, optics, inter-row pathways, labeling schemes, and environmental elements that influence usability—like lighting and line of sight. I’ve watched good designs stumble because a patch field sat in a shadow, which sounds silly till you’re tracing a link with a headlamp and squinting.

2) Fabric Baseline: Leaf–Spine with EVPN-VXLAN, Plus the One Table You Actually Use

Leaf–spine wins because it scales horizontally and keeps hop-count flat. EVPN-VXLAN gives you scalable L2 domains without VLAN sprawl, and a real control plane for MAC/IP learning. Keep the underlay boring: IS-IS or eBGP with equal-cost multipath; don’t hide clever tricks in there. If you need to explain a route in a war room, “boring” is a gift.
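
One way to keep an eBGP underlay boring is to make the AS numbering mechanical. The sketch below is a hypothetical plan, not taken from any vendor guide: it assumes every spine shares one private ASN and each leaf gets its own, drawn from the 16-bit private-use range 64512 to 65534. The function name `plan_underlay_asns` and the `spineN`/`leafN` device names are illustrative.

```python
from typing import Dict

PRIVATE_ASN_FIRST = 64512  # start of the 16-bit private-use ASN range
PRIVATE_ASN_LAST = 65534   # end of the 16-bit private-use ASN range


def plan_underlay_asns(num_spines: int, num_leaves: int) -> Dict[str, int]:
    """Return a device-name -> ASN map for a two-tier leaf-spine fabric.

    Assumption: all spines share the first private ASN and each leaf
    gets the next unused one. With a shared spine ASN, ordinary AS-path
    loop prevention makes a spine drop any route that already passed
    through another spine.
    """
    if num_spines < 1 or num_leaves < 1:
        raise ValueError("need at least one spine and one leaf")

    needed = 1 + num_leaves  # one shared spine ASN plus one per leaf
    available = PRIVATE_ASN_LAST - PRIVATE_ASN_FIRST + 1
    if needed > available:
        raise ValueError("not enough 16-bit private ASNs for this fabric")

    plan: Dict[str, int] = {}
    for i in range(1, num_spines + 1):
        plan[f"spine{i}"] = PRIVATE_ASN_FIRST
    for i in range(1, num_leaves + 1):
        plan[f"leaf{i}"] = PRIVATE_ASN_FIRST + i
    return plan


if __name__ == "__main__":
    for device, asn in plan_underlay_asns(num_spines=2, num_leaves=4).items():
        print(f"{device}: AS{asn}")
```

The point of a scheme like this is that anyone in the war room can derive a device's ASN from its name without opening a spreadsheet.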

| Element | Practical Choice | Why it survives 3 a.m. |
|---|---|---|
| Underlay | IS-IS or eBGP | Simple ECMP, vendor-neutral |
| Overlay | EVPN-VXLAN | Scalable L2, cleaner mobility |
| Failure policy | Fast reroute + ECMP | Small blast radius |
| Optics | 100/200/400G mix | Cost vs. reach balancing |
| Cabling | OM4/OS2, DAC for TOR | Keep install repeatable |
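
The "small blast radius" row is easy to put numbers on. As a rough sketch, assuming a two-tier fabric where every leaf has exactly one equal-speed link to every spine, the number of equal-cost leaf-to-leaf paths equals the spine count, and each failed spine removes the same share of capacity. The function names here are made up for illustration.

```python
def ecmp_paths(spines: int) -> int:
    """Equal-cost leaf-to-leaf paths, assuming one link per leaf-spine pair."""
    if spines < 1:
        raise ValueError("need at least one spine")
    return spines


def spine_failure_impact(spines: int, failed: int = 1) -> float:
    """Fraction of leaf-to-leaf capacity lost when `failed` spines are down.

    Assumes all uplinks run at the same speed and ECMP spreads load evenly.
    """
    if spines < 1:
        raise ValueError("need at least one spine")
    if not 0 <= failed <= spines:
        raise ValueError("failed must be between 0 and the spine count")
    return failed / spines


if __name__ == "__main__":
    for n in (2, 4, 8):
        lost = spine_failure_impact(n)
        print(f"{n} spines: {ecmp_paths(n)} paths, {lost:.0%} lost per spine failure")
```

Under those assumptions, going from two spines to four halves the capacity you lose when one spine drops, which is one reason wider spine layers are easier to maintain during change windows.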

3) Physical Layer Choices: Fiber, Copper, and How Pathways Dictate the Rest

| Use case | Medium | Notes |
|---|---|---|
| TOR ↔ server | DAC | Cheap, short, tidy |
| Leaf ↔ spine (row) | AOC / OM4 SR | Manage tray congestion |
| Row ↔ row | OM4/OS2 | Plan pulls during low-risk windows |
| DCI | OS2 + 400ZR/ZR+ | Budget cooling in MPO cassettes |
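
A table like this can be turned into a small policy function so every cable request gets the same answer. The sketch below is only an illustration: the length thresholds in `REACH_POLICY` are placeholder assumptions, not vendor specifications, and should be replaced with the data-sheet reach of the optics and cables you actually buy.

```python
from typing import List, Tuple

# (max run length in metres, medium), in ascending order.
# ASSUMED placeholder thresholds; swap in real data-sheet reach values.
REACH_POLICY: List[Tuple[float, str]] = [
    (3.0, "DAC"),
    (30.0, "AOC"),
    (100.0, "OM4 multimode"),
]
FALLBACK_MEDIUM = "OS2 single-mode"  # anything longer than the last threshold


def pick_medium(run_length_m: float) -> str:
    """Return the first medium whose assumed reach covers the run length."""
    if run_length_m <= 0:
        raise ValueError("run length must be positive")
    for max_length_m, medium in REACH_POLICY:
        if run_length_m <= max_length_m:
            return medium
    return FALLBACK_MEDIUM


if __name__ == "__main__":
    for length in (2, 15, 80, 500):
        print(f"{length} m -> {pick_medium(length)}")
```

Keeping the thresholds in one list makes the policy easy to review and keeps the SKU count down, since nobody picks a medium by habit.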

4) Optics Heat, Aisle Cooling, and Why Fixture Profiles Matter

5) Security Segmentation and East-West Visibility (Plus Human Factors)

6) Operations: Labeling, Change Windows, and Light That Doesn’t Fight You

7) Deployment Blueprint: From Day-0 to Day-2 (with a Simple BOM Table)

| Category | Items |
|---|---|
| Fabric | Leaf switches (100/200/400G), Spine switches (400/800G), transceivers |
| Cabling | OM4/OS2 trunks, MPO cassettes, DAC/AOC jumpers |
| DCI | 400ZR/ZR+ modules, OS2 spares |
| Lighting | Squarebeam Elite, SeamLine Batten, Quattro Triproof Batten |
| Tools | Power meter, visual fault locator, label printer, FLIR cam |
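
To get from categories to quantities, a back-of-the-envelope count is usually enough for a first quote. This is a minimal sketch under stated assumptions: one link per leaf-spine pair, two transceivers per fabric link, one DAC per server, and a spare percentage you choose yourself. The function `fabric_bom` and its parameters are hypothetical, not part of any tool.

```python
from typing import Dict


def fabric_bom(leaves: int, spines: int, servers_per_leaf: int,
               spare_percent: int = 10) -> Dict[str, int]:
    """Estimate link, transceiver, and jumper counts for a two-tier fabric.

    Assumptions: one link per leaf-spine pair, one transceiver at each
    end of every fabric link, and one DAC jumper per server.
    """
    if min(leaves, spines) < 1 or servers_per_leaf < 0 or spare_percent < 0:
        raise ValueError("counts must be positive and spares non-negative")

    fabric_links = leaves * spines
    transceivers = 2 * fabric_links
    # Integer ceiling division so spares always round up.
    transceiver_spares = (transceivers * spare_percent + 99) // 100
    dac_jumpers = leaves * servers_per_leaf

    return {
        "fabric_links": fabric_links,
        "transceivers": transceivers,
        "transceiver_spares": transceiver_spares,
        "dac_jumpers": dac_jumpers,
    }


if __name__ == "__main__":
    for item, qty in fabric_bom(leaves=8, spines=4, servers_per_leaf=20).items():
        print(f"{item}: {qty}")
```

Rounding spares up rather than down is a deliberate choice here: a shelf that is one optic short is the kind of gap that only shows up during an incident.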

8) Procurement, Samples, and Lifecycle Planning (So You Don’t Re-do This Next Year)

FAQ

- Q1: What’s the simplest starting topology for a mid-size build?

Leaf–spine with eBGP underlay, EVPN-VXLAN overlay, and ECMP. Keep SKUs limited to a couple of optics types and one fiber type if you can.

- Q2: How do lighting choices actually affect network uptime?

Mostly through serviceability: clear labeling under neutral, low-glare light reduces mistakes during changes or incidents, which directly lowers MTTR.

- Q3: Is 800G practical yet across the whole fabric?

Core/aggregation, yes; top-of-rack, usually not cost-effective. Mix 100/200/400G at the edges, scale 800G where aggregation paths justify it.

- Q4: Fiber vs. DAC for leaf-server links?

If runs are short and density is high, DAC is tidy and cheap. If you need more flexibility or reach, AOC or OM4 makes sense.

- Q5: Where should I place emergency fixtures in relation to racks?

Keep egress lines obvious from both cold and hot aisles. Validate with a power-off walk; aim for even illumination without glare on signage.

- Q6: What internal resources should I read next?

Start with data center lighting best practices, then scan the lighting category index and the product lineup.