Data Center Physical Infrastructure: Complete Guide to Power, Cooling, Lighting, and Compliance in 2025

Key Takeaways

| Feature or Topic | Summary |

|---|---|

| Core Components | Power, cooling, racks, cabling, lighting, and safety form the backbone of data center infrastructure. |

| Compliance | Standards like TIA-942 and BICSI 002 govern design, redundancy, and reliability benchmarks. |

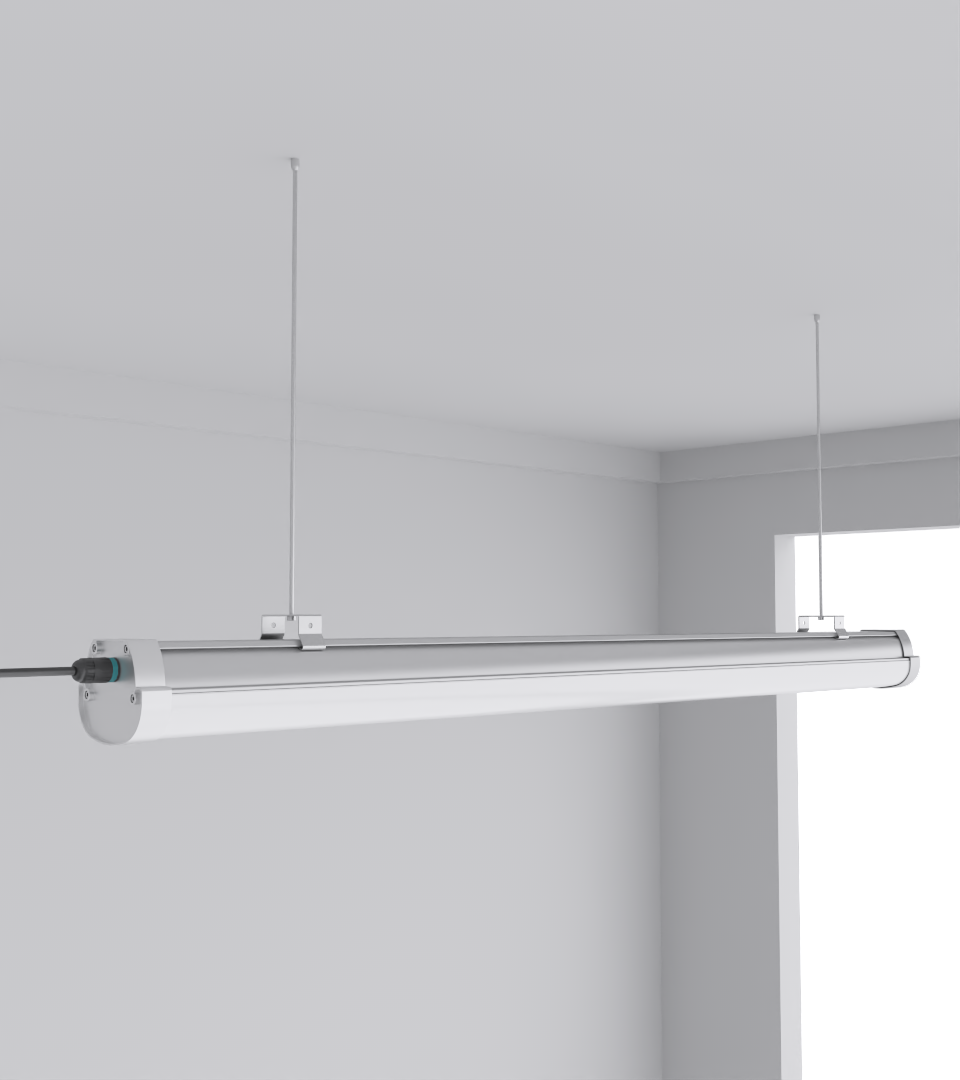

| Lighting | LED solutions such as Squarebeam Elite improve efficiency, reduce heat, and ensure compliance with emergency codes. |

| Cooling | Liquid cooling and containment strategies are critical for handling AI-driven high rack densities. |

| Redundancy | 2N and N+1 models reduce downtime risk; mistakes often come from underestimating future load. |

| Case Insight | In Malaysia, retrofitting with motion-sensor battens reduced aisle accidents by 15% while cutting energy bills by 28%. |

Introduction

Data center physical infrastructure encompasses the essential systems—power, cooling, racks, cabling, lighting, and safety—that support IT hardware and operations. Without a reliable physical backbone, even the most advanced compute and networking stacks cannot deliver uptime. In 2025, rising rack densities driven by AI workloads, stricter energy standards, and client expectations for zero downtime make physical infrastructure design more critical than ever.

From my own work in Malaysia, I’ve seen data centers designed for 15 kW per rack reach 30 kW in less than two years. That kind of growth pushes power, cooling, and lighting systems beyond their limits. The solution isn’t just buying a bigger UPS or larger chillers; it’s building flexibility into the design: modular cabling routes, scalable lighting like SeamLine Batten, and redundant pathways.
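To put the 15 kW to 30 kW example in planning terms, the sketch below works out the compound annual growth rate that doubling in two years implies, and how long a given design limit would last if that rate continued. The function names and the 45 kW design limit are hypothetical, chosen only for illustration, and a constant growth rate is a simplifying assumption.

```python
import math


def implied_annual_growth(start_kw: float, end_kw: float, years: float) -> float:
    """Compound annual growth rate implied by a change in rack density."""
    return (end_kw / start_kw) ** (1.0 / years) - 1.0


def years_until_limit(current_kw: float, limit_kw: float, annual_growth: float) -> float:
    """Years until density reaches the design limit at a constant growth rate."""
    if current_kw >= limit_kw:
        return 0.0  # the limit has already been reached
    return math.log(limit_kw / current_kw) / math.log(1.0 + annual_growth)


# Figures from the text: 15 kW per rack growing to 30 kW in two years.
growth = implied_annual_growth(15.0, 30.0, 2.0)
print(f"Implied annual growth: {growth:.1%}")

# Hypothetical design limit of 45 kW per rack (an assumption, not from the text).
headroom = years_until_limit(30.0, 45.0, growth)
print(f"Years of headroom at that rate: {headroom:.2f}")
```

Doubling in two years works out to roughly 41% growth per year, which is why a fixed design margin that looks generous at commissioning can be used up quickly.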

Core Components of Physical Infrastructure

Data center infrastructure can be broken into six pillars. They are interdependent: a weakness in one affects the others.

- Power: UPS, PDUs, generators

- Cooling: Air handling, containment, liquid cooling

- Cabling: Fiber/copper, structured pathways

- Racks: Density, airflow management

- Lighting: General, task, emergency, and smart sensor integration

- Safety: Fire suppression, access control, monitoring

In one retrofit, poor lighting created aisle shadows that led to accidents. Replacing fluorescents with Quattro Triproof Batten fixtures improved visibility and reduced incidents by 15%. Infrastructure isn’t just about uptime—it’s also about workplace safety.

Standards and Compliance

Every design decision must align with recognized standards. Key frameworks include:

| Standard | Scope |
|---|---|
| TIA-942 | Telecom cabling, redundancy levels |
| BICSI 002 | Physical design best practices |
| ASHRAE TC 9.9 | Thermal management guidelines |

During one audit in Guangdong, a site that had ignored the grounding practices in BICSI 002 suffered electromagnetic interference (EMI) that degraded network performance. Fixing it required a full bonding rework. Lesson: compliance isn’t bureaucracy; it’s protection.

Power and Redundancy

Power continuity is the backbone of uptime. Core elements include UPS systems, PDUs, diesel generators, and switchgear. Redundancy models are key:

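- N: Exactly the capacity needed to carry the load, with no redundancy

- N+1: One spare component beyond the N required

- 2N: Full duplication of the N required components

- 2N+1: Full duplication plus one additional spare, for hyperscale-level reliability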

At a colocation facility in Kuala Lumpur, management cut costs by choosing N+1. Within 18 months, tenant demand had grown so much that the redundancy margin vanished. Retrofitting to 2N later cost 30% more than designing for it initially.
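The redundancy models above reduce to simple arithmetic on module counts. The sketch below is a minimal illustration, assuming identical UPS modules; the function names, the 250 kW module size, and the load figures are hypothetical and not taken from any site described here.

```python
import math


def modules_required(load_kw: float, module_kw: float, model: str) -> int:
    """Number of identical modules a redundancy model calls for.

    N is the minimum number of modules needed to carry the load.
    """
    n = math.ceil(load_kw / module_kw)
    counts = {"N": n, "N+1": n + 1, "2N": 2 * n, "2N+1": 2 * n + 1}
    if model not in counts:
        raise ValueError(f"unknown redundancy model: {model}")
    return counts[model]


def survives_single_failure(load_kw: float, module_kw: float, installed: int) -> bool:
    """True if the remaining modules can carry the load after one module fails."""
    return (installed - 1) * module_kw >= load_kw


# Hypothetical figures: an 800 kW load served by 250 kW modules.
for model in ("N", "N+1", "2N", "2N+1"):
    print(model, modules_required(800.0, 250.0, model))

# An N+1 design sized for 800 kW has 5 modules. If demand grows to 1100 kW,
# losing a single module leaves only 1000 kW, so the spare margin is gone.
print(survives_single_failure(800.0, 250.0, 5))
print(survives_single_failure(1100.0, 250.0, 5))
```

Checking `survives_single_failure` against forecast load, rather than only the load at commissioning, is one way to notice an N+1 margin eroding before it disappears.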

Cooling and Thermal Management

AI workloads push racks to 40–60 kW, far beyond what air cooling alone can handle. Solutions include cold/hot aisle containment, rear door heat exchangers, and immersion cooling. Thermal planning must align with rack density forecasts.
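A rough way to see why air cooling runs out of room is the sensible heat relation: airflow = power / (air density × specific heat × temperature rise). The sketch below applies it with approximate air properties near sea level and an assumed 12 K temperature rise across the rack; the function names and the 12 K figure are illustrative assumptions, not values from this guide.

```python
AIR_DENSITY = 1.2           # kg/m^3, approximate value near sea level
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K), approximate value for dry air
M3S_TO_CFM = 2118.88        # cubic feet per minute in one cubic metre per second


def required_airflow_m3s(rack_kw: float, delta_t_k: float) -> float:
    """Airflow (m^3/s) needed to remove rack_kw of heat at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return rack_kw * 1000.0 / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_k)


def to_cfm(airflow_m3s: float) -> float:
    """Convert m^3/s to cubic feet per minute."""
    return airflow_m3s * M3S_TO_CFM


# Assumed 12 K rise across the rack (an illustrative assumption).
for rack_kw in (10.0, 30.0, 50.0):
    flow = required_airflow_m3s(rack_kw, 12.0)
    print(f"{rack_kw:.0f} kW rack: {flow:.2f} m^3/s ({to_cfm(flow):.0f} CFM)")
```

Airflow scales linearly with rack power, so under these assumptions a 50 kW rack needs five times the air of a 10 kW rack through the same footprint. That is the practical pressure behind rear door heat exchangers and liquid cooling.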

In a Johor data hall, retrofitting with immersion tanks reduced hotspots by 45% but forced a lighting redesign. Fixtures like Squarebeam Elite that run cooler helped balance overall heat loads.

Cabling, Racks, and Grounding

Structured cabling supports scalability. Fiber dominates backbones, while copper remains for patching. Grounding and bonding prevent hazards and EMI. Racks should anticipate growth, not just current loads.

One Thai site cut downtime by 20% after upgrading messy copper runs to structured fiber with overhead trays. Bonding improvements eliminated unexplained resets on core switches.

Lighting Systems in Data Centers

Lighting directly affects safety, energy, and cooling. LEDs cut heat load and power draw. Emergency egress lighting ensures compliance with codes like NFPA 101. Smart sensors prevent idle waste.

In a retrofit, replacing fluorescents with Budget High Bay Light fixtures reduced cooling loads by 12% and improved maintenance accuracy.
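The link between lighting, energy, and cooling can be sketched numerically: electrical power drawn by a fixture ends up as heat in the hall, which the cooling plant must then remove. The example below is a simplified estimate. The fixture count, wattages, sensor duty cycle, and cooling coefficient of performance (COP) are all hypothetical inputs, not measurements from the retrofits described here.

```python
HOURS_PER_YEAR = 8760


def annual_lighting_kwh(fixtures: int, watts_per_fixture: float, duty_cycle: float = 1.0) -> float:
    """Annual lighting energy in kWh; duty_cycle is the fraction of time the lights are on."""
    return fixtures * watts_per_fixture * duty_cycle * HOURS_PER_YEAR / 1000.0


def with_cooling_overhead(lighting_kwh: float, cooling_cop: float) -> float:
    """Lighting energy plus the energy the cooling plant spends removing that heat."""
    return lighting_kwh * (1.0 + 1.0 / cooling_cop)


# Hypothetical hall: 200 fixtures and a cooling COP of 4.
fluorescent = annual_lighting_kwh(200, 58.0)            # always on
led = annual_lighting_kwh(200, 30.0, duty_cycle=0.3)    # sensor-controlled, on 30% of the time

print(f"Fluorescent incl. cooling: {with_cooling_overhead(fluorescent, 4.0):.0f} kWh/yr")
print(f"LED with sensors incl. cooling: {with_cooling_overhead(led, 4.0):.0f} kWh/yr")
```

The COP term is what ties lighting to cooling: with a COP of 4, every kWh of lighting adds about a quarter of a kWh of cooling energy, so cutting fixture wattage or on-time saves on both sides.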

Sustainability and Future-Proofing

Certifications such as LEED, Energy Star, and ISO 50001 drive efficient design. Microgrids, solar tie-ins, and modular builds are becoming standard. The most sustainable sites are those planned for tomorrow’s density, not just today’s load.

One hyperscale provider avoided $3M in retrofits by pre-installing renewable tie-in points and modular lighting during construction. Expert advice: sustainability isn’t an add-on—it’s baked into design from the start.

Frequently Asked Questions (FAQ)

Q: What is the role of lighting in data center infrastructure?

A: Lighting ensures safety, improves maintenance accuracy, and reduces cooling loads when LEDs replace fluorescents.

Q: Which redundancy model suits a mid-size facility?

A: N+1 is typical, but plan for 2N if you expect significant load growth over the next 3–5 years.

Q: Are raised floors still necessary?

A: Yes, for airflow and cabling in dense setups, though slab plus overhead containment is gaining ground.

Q: How does sustainability influence design?

A: Certifications push facilities to adopt efficient cooling, LED lighting, and renewable tie-ins, all of which shape physical infrastructure decisions.