Data Centre Facilities Explained (2025): Standards, Cooling, Power, and Efficiency Metrics

- What Defines a Data Centre Facility?

- Standards Landscape: Uptime, TIA-942, ISO/IEC 22237

- Energy Efficiency Metrics: PUE, WUE, CUE

- Cooling Architectures: When Air Stops Scaling

- Power Architectures & Redundancy Choices

- Lighting Systems Inside Data Centre Facilities

- Site Selection, Permits & Grid Pressure

- Sustainability Roadmap for Data Centre Facilities

- Frequently Asked Questions (FAQ)

Key Takeaways

| Feature or Topic | Summary |

|---|---|

| Definition | A facility is the physical infrastructure (power, cooling, lighting, safety) distinct from IT systems. |

| Standards | Uptime Tiers, TIA-942-C, ISO/IEC 22237, EN 50600, ASHRAE 90.4 guide facility design & operation. |

| Efficiency Targets | Global average PUE = 1.56 (2024). Modern designs achieve 1.3 or lower. |

| AI & Cooling | High-density racks (30–60kW) require liquid cooling and ORv3 support. |

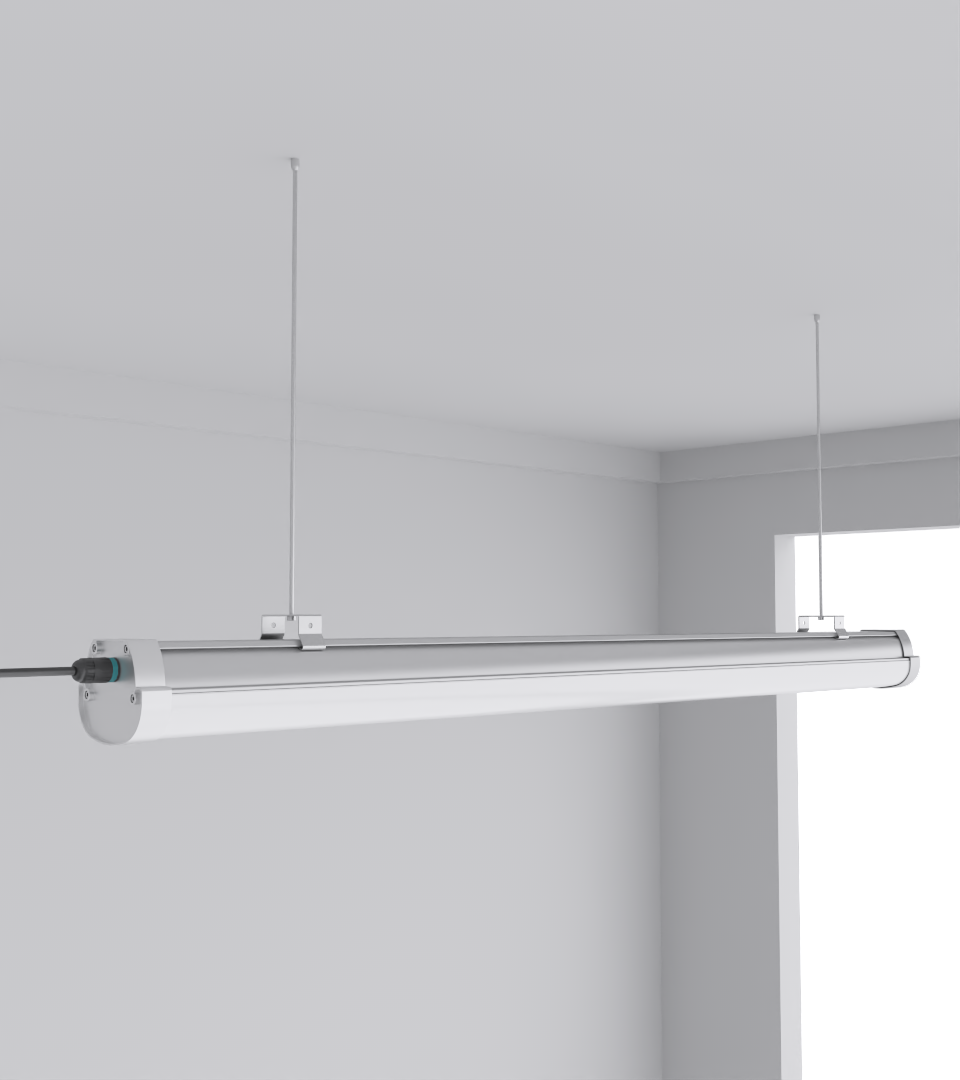

| Lighting | PoE LED lighting, emergency egress, and motion sensors support safety and energy goals. |

1. What Defines a Data Centre Facility?

A data centre facility is a layered physical infrastructure delivering continuous power, stable cooling, connectivity, fire protection, and security. It supports IT but is distinct from IT itself. Operators who ignore the facility layer risk downtime, regardless of server resilience.

2. Standards Landscape: Uptime, TIA-942, ISO/IEC 22237

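Facility design and operation are typically aligned with published standards: Uptime Institute Tiers (redundancy and availability classification), TIA-942-C (telecommunications infrastructure), ISO/IEC 22237 and its European counterpart EN 50600 (facilities and infrastructures), and BICSI 002 (design and implementation best practices). ASHRAE 90.4 adds energy-efficiency requirements, and NFPA 75 covers fire protection of IT equipment.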

3. Energy Efficiency Metrics: PUE, WUE, CUE

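PUE (Power Usage Effectiveness) is total facility energy divided by IT equipment energy, so 1.0 is the theoretical floor. The global average is 1.56 (2024), and best-in-class facilities reach 1.2–1.3. WUE (Water Usage Effectiveness) measures liters of water per IT kWh, while CUE (Carbon Usage Effectiveness) tracks carbon emissions per IT kWh. Together, the three metrics define sustainable facility operations.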
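
As a minimal sketch, each of the three metrics is a ratio against annual IT energy. The `AnnualTotals` container, its field names, and the sample figures below are illustrative assumptions, not measurements from any real site.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnnualTotals:
    """Hypothetical container for one year of metered site data."""
    total_facility_kwh: float  # everything the site draws, IT included
    it_kwh: float              # energy delivered to IT equipment only
    water_liters: float        # site water consumption
    co2e_kg: float             # emissions attributed to total site energy

    def __post_init__(self):
        # All three metrics divide by IT energy, so it must be positive.
        if self.it_kwh <= 0:
            raise ValueError("it_kwh must be positive")


def pue(t: AnnualTotals) -> float:
    """Power Usage Effectiveness: total facility energy per unit of IT energy."""
    return t.total_facility_kwh / t.it_kwh


def wue(t: AnnualTotals) -> float:
    """Water Usage Effectiveness in liters per IT kWh."""
    return t.water_liters / t.it_kwh


def cue(t: AnnualTotals) -> float:
    """Carbon Usage Effectiveness in kg CO2e per IT kWh."""
    return t.co2e_kg / t.it_kwh


# Made-up sample year, chosen only to make the arithmetic easy to follow.
sample = AnnualTotals(
    total_facility_kwh=13_000_000,
    it_kwh=10_000_000,
    water_liters=18_000_000,
    co2e_kg=4_000_000,
)
print(f"PUE={pue(sample):.2f}  WUE={wue(sample):.2f} L/kWh  CUE={cue(sample):.2f} kgCO2e/kWh")
```

Using annual totals rather than a single spot reading keeps seasonal cooling load from skewing the result.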

4. Cooling Architectures: When Air Stops Scaling

Traditional air cooling (CRAC/CRAH units with hot- or cold-aisle containment) struggles once rack densities exceed 30–40 kW. Direct-to-chip and immersion liquid cooling provide the efficiency and reliability that AI-era densities demand.
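
One way to see why air runs out of headroom is the sensible-heat relation: the airflow needed to carry away a rack's heat grows linearly with its power. The sketch below assumes round-number air properties (density of about 1.2 kg/m³, specific heat of about 1005 J/(kg·K)) and a 12 K inlet-to-outlet temperature rise. These values and the function name are illustrative assumptions, not design figures.

```python
AIR_DENSITY_KG_M3 = 1.2      # assumed: approximate density of air near room temperature
AIR_CP_J_PER_KG_K = 1005.0   # assumed: approximate specific heat of air


def airflow_m3_per_s(rack_kw: float, delta_t_k: float = 12.0) -> float:
    """Volumetric airflow needed to remove rack_kw of heat at a given air temperature rise.

    Rearranged from P = density * flow * cp * delta_T.
    """
    if rack_kw < 0 or delta_t_k <= 0:
        raise ValueError("rack_kw must be >= 0 and delta_t_k must be > 0")
    return rack_kw * 1000.0 / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


for kw in (10, 20, 40, 60):
    print(f"{kw:>2} kW rack -> {airflow_m3_per_s(kw):.2f} m^3/s of air")
```

Under these assumptions a 40 kW rack needs four times the airflow of a 10 kW rack through the same footprint, which is where fan power and containment start to become the limiting factor.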

5. Power Architectures & Redundancy Choices

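Common redundancy options include N (just enough capacity for the load), N+1 (one spare unit), 2N (two independent full-capacity systems), and 2(N+1) (two independent systems, each with a spare). Battery technologies vary: VRLA, Li-ion, and Nickel-Zinc. Proper grounding, switchgear design, and UPS integration are non-negotiable for safety.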
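
To make the redundancy schemes concrete, the sketch below counts how many equally sized units (UPS modules, for example) each scheme implies for a given load. The function name, the 1,000 kW load, and the 250 kW unit size are hypothetical, and real designs also have to account for derating and distribution paths.

```python
import math

SCHEMES = ("N", "N+1", "2N", "2(N+1)")


def units_required(load_kw: float, unit_kw: float, scheme: str) -> int:
    """Number of equally sized units a redundancy scheme implies for a load."""
    if load_kw <= 0 or unit_kw <= 0:
        raise ValueError("load_kw and unit_kw must be positive")
    # N is the minimum number of units that can carry the full load.
    n = math.ceil(load_kw / unit_kw)
    counts = {
        "N": n,                 # no spare capacity
        "N+1": n + 1,           # one spare unit
        "2N": 2 * n,            # two independent full-capacity systems
        "2(N+1)": 2 * (n + 1),  # two independent systems, each with a spare
    }
    if scheme not in counts:
        raise ValueError(f"unknown scheme: {scheme!r}")
    return counts[scheme]


# Hypothetical example: 1,000 kW critical load served by 250 kW units.
for scheme in SCHEMES:
    print(f"{scheme:>7}: {units_required(1000, 250, scheme)} units")
```

The count climbs quickly from N to 2(N+1), which is the capital and efficiency cost that has to be weighed against the availability target.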

6. Lighting Systems Inside Data Centre Facilities

Lighting includes task, emergency egress, and PoE-based LED systems. Fixtures such as SeamLine Batten and Squarebeam Elite ensure safety, efficiency, and integration with management systems.

7. Site Selection, Permits & Grid Pressure

Key factors include power grid interconnection, fiber access, water rights, and noise permits. Community acceptance is increasingly critical. Many large projects stall due to local environmental and permit constraints.

8. Sustainability Roadmap for Data Centre Facilities

A sustainability roadmap tracks PUE, WUE, and CUE and plans for heat reuse. Lighting systems such as Quattro Triproof Batten, paired with motion sensors, lower idle consumption. District heat reuse and renewable PPAs further reduce the environmental footprint.

Frequently Asked Questions (FAQ)

Q1: What’s the difference between a data centre and a data centre facility?

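A data centre is the whole: IT systems plus the facility that houses them. The facility is only the physical infrastructure (power, cooling, lighting, safety) that supports those IT systems.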

Q2: What PUE should be targeted in 2025?

New builds aim for ≤1.3; retrofits typically reach 1.4–1.6.

Q3: Is liquid cooling mandatory for AI racks?

In practice, yes. Air cooling struggles above 30–40 kW per rack, so denser AI racks rely on direct-to-chip or immersion liquid cooling.

Q4: Which standards are most important?

Uptime Institute Tiers, TIA-942-C, ISO/IEC 22237, EN 50600, ASHRAE 90.4, NFPA 75.

Q5: How does lighting fit into data centres?

Lighting supports both safety and efficiency. PoE LED systems cut cabling and integrate with DCIM/BMS platforms.