Data Center Network Architecture 2025

- Definition: What “Data Center Network Architecture” Means in 2025

- Why Leaf–Spine (Clos) Dominates

- When a Three-Tier Still Makes Sense

- Underlay & Overlay: eBGP and VXLAN/EVPN

- Optics & Cabling: 400G → 800G → 1.6T

- AI/ML Workloads: Ethernet RoCE vs InfiniBand

- Day-2 Operations: Telemetry, Automation, Lighting Integration

- Migration & Reliability: Brownfield Strategies

- Frequently Asked Questions (FAQ)

Key Takeaways

| Feature or Topic | Summary |
|---|---|
| Leaf–Spine Architecture | Predictable latency, ECMP scaling, reliability for east–west traffic patterns. |
| Overlays (VXLAN/EVPN) | Segmentation, MAC/IP mobility, multi-tenant routing options. |
| AI/ML Readiness | Ethernet RoCE vs InfiniBand; congestion control tuning critical. |
| Optics Roadmap | 400G today, 800G emerging, 1.6T with CPO on the horizon. |
| Lighting Context | Efficient luminaires reduce heat load, support cooling and network stability. |

1. Definition: What “Data Center Network Architecture” Means in 2025

Data center network architecture is not just a diagram of switches — it’s the blueprint that governs how every packet moves, how workloads scale, and how physical infrastructure like cooling and lighting coexists with digital traffic.

At its core, architecture = topology + control planes + physical infrastructure. In 2025, this includes:

- Topologies: leaf–spine Clos designs dominate, with predictable hop counts and failure domains that are easy to understand.

- Control planes: EVPN as the overlay control plane with VXLAN encapsulation, most commonly on an eBGP underlay for policy control and scale.

- Physical design: optics and cables, rack placement, airflow, and even lighting patterns in hot/cold aisles.

I’ve seen facilities where poor physical layout (for example, lighting fixtures installed too close to hot aisles) interfered with cooling paths, indirectly impacting switch performance. Correcting that layout restored thermal balance and improved fan longevity on top-of-rack switches.

2. Why Leaf–Spine (Clos) Dominates

Legacy three-tier networks (access, aggregation, core) were built for north–south traffic: clients talking to servers. Modern workloads are east–west heavy — VM-to-VM, container-to-container, GPU-to-GPU — and that changes everything.

- Predictable latency: any two leaves are exactly two hops apart (leaf → spine → leaf), which simplifies performance planning.

- ECMP scaling: multiple equal paths keep throughput balanced and resilient.

- Failure isolation: a spine or leaf can fail without collapsing the entire network.
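The ECMP and failure-isolation points above can be illustrated with a small flow-hashing sketch. This is a simplified model, not how any particular switch ASIC works: the spine names, the sample flow, and the use of SHA-256 as the hash are all assumptions made for illustration.

```python
import hashlib

def pick_spine(flow, spines):
    """Map a flow's 5-tuple onto one of the available spines (illustrative hash)."""
    if not spines:
        raise ValueError("no spines available")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return spines[int.from_bytes(digest[:4], "big") % len(spines)]

spines = ["spine1", "spine2", "spine3", "spine4"]   # hypothetical names
flow = ("10.0.1.10", "10.0.2.20", 6, 49152, 443)     # src, dst, proto, sport, dport

chosen = pick_spine(flow, spines)                    # stable for a given flow
survivors = [s for s in spines if s != chosen]       # simulate that spine failing
rerouted = pick_spine(flow, survivors)               # flow lands on a surviving spine
print(chosen, "->", rerouted)
```

Hashing per flow rather than per packet keeps a flow's packets on one path and avoids reordering; the trade-off is that a few large flows can still hash onto the same uplink.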

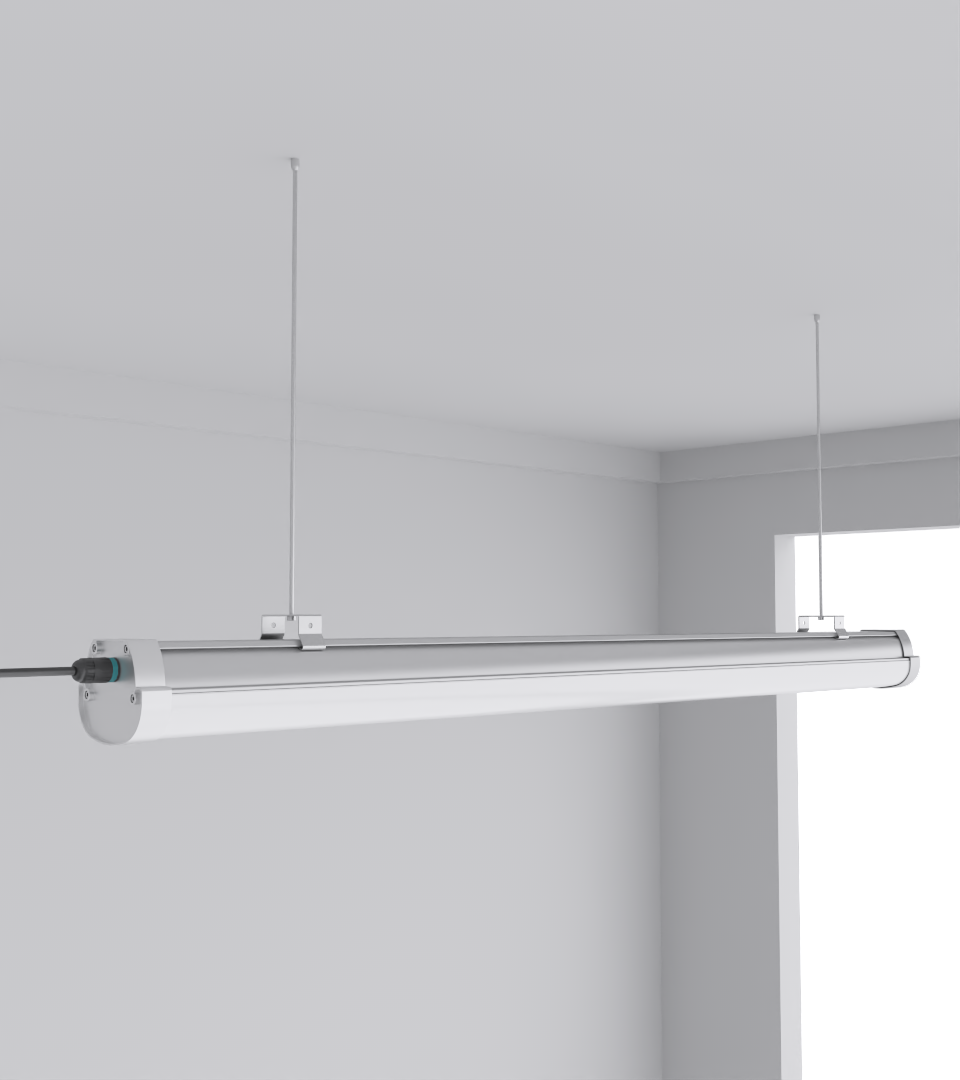

In practice, architects I’ve worked with prefer 2:1 oversubscription for general-purpose compute, but for GPU clusters they push for 1.2:1 or even 1:1. That means more optics, more power, and more heat. And yes — that heat doesn’t just affect switches. Fixtures like the SeamLine Batten keep lighting loads efficient so thermal stress isn’t compounded during high-density builds.
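The oversubscription ratios mentioned above are simply a leaf's server-facing capacity divided by its spine-facing capacity. A minimal sketch of that arithmetic follows; the port counts and speeds are hypothetical examples, not recommendations.

```python
from fractions import Fraction

def oversubscription(downlinks, downlink_gbps, uplinks, uplink_gbps):
    """Return leaf oversubscription: downlink capacity / uplink capacity."""
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("a leaf needs at least one uplink with positive speed")
    return Fraction(downlinks * downlink_gbps, uplinks * uplink_gbps)

# Hypothetical general-purpose leaf: 48 x 100G server ports, 6 x 400G uplinks.
general = oversubscription(48, 100, 6, 400)

# Hypothetical GPU leaf: same server ports, 10 x 400G uplinks.
gpu = oversubscription(48, 100, 10, 400)

print(f"general compute: {float(general):.1f}:1")  # 2.0:1
print(f"GPU cluster:     {float(gpu):.1f}:1")      # 1.2:1
```

Moving from 2:1 to 1.2:1 in this example means going from 6 to 10 uplinks per leaf, which is where the extra optics, power, and heat come from.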

3. When a Three-Tier Still Makes Sense

Not every facility needs leaf–spine. Small data centers, regional nodes, or older financial sites sometimes stick with a three-tier design.

- Strict L2 domains: legacy applications that demand broadcast adjacency.

- Budget constraints: fewer switches can appear cheaper at small scale.

- Lower traffic volume: hierarchical cores aren’t stressed by limited east–west demand.

That said, I’ve seen cases where companies saved on switches but ended up paying more in cooling because their lighting was outdated — fluorescent fixtures spilling unnecessary heat into the plenum. Modern replacements like the Budget High Bay Light cut wattage and heat output, which helps hold the line on total facility BTUs.

4. Underlay & Overlay: eBGP and VXLAN/EVPN

Underlay: eBGP has become the default due to simplicity, policy control, and scale. OSPF/IS-IS still appear in smaller builds, but eBGP tends to simplify troubleshooting and capacity growth.
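One commonly used numbering plan for an eBGP underlay (along the lines of RFC 7938) gives all spines a shared ASN and each leaf its own private ASN. The sketch below generates such a plan; the device names and the choice of starting ASN are assumptions for illustration, and real designs may use 32-bit private ASNs or a different scheme.

```python
PRIVATE_ASN_START = 64512  # first 16-bit private ASN
PRIVATE_ASN_END = 65534    # last 16-bit private ASN

def asn_plan(spines, leaves, base=PRIVATE_ASN_START):
    """Assign one shared ASN to all spines and a unique ASN to each leaf."""
    last_needed = base + len(leaves)
    if base < PRIVATE_ASN_START or last_needed > PRIVATE_ASN_END:
        raise ValueError("plan does not fit in the 16-bit private ASN range")
    plan = {spine: base for spine in spines}
    for offset, leaf in enumerate(leaves, start=1):
        plan[leaf] = base + offset
    return plan

# Hypothetical device names.
plan = asn_plan(["spine1", "spine2"], ["leaf1", "leaf2", "leaf3"])
for device, asn in plan.items():
    print(device, asn)
```

Because every leaf sits in its own AS, the AS path alone shows which leaf originated a route, which is part of why eBGP underlays are often easier to troubleshoot.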

Overlay: VXLAN/EVPN decouples tenant L2/L3 segments from the routed L3 underlay and enables:

- Multi-tenant segmentation with VRFs and route-targets.

- MAC/IP mobility across racks and failure domains.

- Control-plane options (e.g., Type-2 MAC/IP routes for host reachability, Type-5 IP prefix routes for routed subnets) that match the application layout.
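On the wire, each overlay segment is identified by a 24-bit VXLAN Network Identifier (VNI) carried in an 8-byte VXLAN header (RFC 7348). The sketch below packs and parses that header to show where segmentation lives in the packet; the VNI value is a made-up example, and the outer Ethernet/IP/UDP headers are omitted.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid

def build_vxlan_header(vni):
    """Pack the 8-byte VXLAN header: flags, 24 reserved bits, VNI, 8 reserved bits."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)

def parse_vni(header):
    """Extract the VNI from the first 8 bytes of a VXLAN header."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8

header = build_vxlan_header(10100)   # hypothetical tenant segment
print(header.hex(), parse_vni(header))
```

The 24-bit field allows roughly 16 million segments, compared with 4094 usable VLAN IDs, which is what makes multi-tenant segmentation practical at fabric scale.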

One deployment I reviewed misaligned EVPN route types and ended up with traffic tromboning, where packets detour through a distant gateway before reaching a nearby destination. It wasn’t a fabric failure; it was a mismatch between routing choices and workload placement. Vendor reference designs help avoid these pitfalls.

Lighting tie-in: as overlays stretch across rows, consistent illumination matters for technician accuracy during cabling and patching. The Quattro Triproof Batten keeps aisles bright and practical without adding unnecessary heat.

5. Optics & Cabling: 400G → 800G → 1.6T

Optical planning is one of the hardest parts of modern fabrics. 400G is mainstream, 800G is being deployed in large AI facilities, and 1.6T is on the roadmap alongside co-packaged optics (CPO). Every step raises power and thermal considerations that must be coordinated with facilities and, yes, lighting.

| Speed Tier | Common Media / Optics | Deployment Notes |
|---|---|---|
| 400G | DAC, AOC | Widespread today, reasonable cost, manageable power draw. |
| 800G | Silicon photonics | Adoption in GPU clusters; cabling plant and optics power need careful planning. |
| 1.6T | CPO (future) | Likely to force cooling redesigns and new assumptions about reach and connectors. |

As densities climb, cooling loads increase. Architects should coordinate optics, power, and efficient luminaires so the room stays thermally stable. Choosing low-heat, high-efficiency fixtures like the SeamLine Batten helps offset the additional BTU impact of high-speed optics.
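To put optics and lighting on the same thermal ledger, electrical load can be converted to heat load using the standard factor of about 3.412 BTU/hr per watt. The sketch below does that conversion; every wattage and count in it is a placeholder assumption, not a measured or datasheet value.

```python
BTU_PER_HOUR_PER_WATT = 3.412  # standard conversion: 1 W is about 3.412 BTU/hr

def heat_load_btu_per_hour(loads_watts):
    """Sum electrical loads (watts) and convert to BTU/hr of heat to remove."""
    return sum(loads_watts.values()) * BTU_PER_HOUR_PER_WATT

# Placeholder figures for one row; replace with measured or datasheet values.
row_loads_watts = {
    "optics": 64 * 15.0,    # hypothetical: 64 modules at 15 W each
    "lighting": 4 * 40.0,   # hypothetical: 4 fixtures at 40 W each
}
print(f"{heat_load_btu_per_hour(row_loads_watts):.0f} BTU/hr")
```

Keeping the loads in one dictionary makes it easy to compare how much of a row's heat comes from optics versus fixtures when planning an upgrade.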

6. AI/ML Workloads: Ethernet RoCE vs InfiniBand

AI changes the shape of the fabric. Training clusters must deliver ultra-low latency and predictable throughput for collective communication.

- InfiniBand: still the default for many large AI clusters, with mature congestion management and tooling.

- Ethernet (RoCEv2): gaining ground with ECN/DCQCN tuning and vendor investment; attractive for operational consistency.

- Hybrid strategies: split clusters — Ethernet for general compute, IB for GPUs — while keeping operations sane.
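Much of the ECN/DCQCN tuning mentioned above comes down to choosing the switch's marking thresholds. A minimal sketch of the RED-style marking curve commonly used for this follows; the parameter names (`kmin_kb`, `kmax_kb`, `pmax`) and the example values are assumptions, and real platforms expose these knobs under vendor-specific names and units.

```python
def ecn_mark_probability(queue_kb, kmin_kb, kmax_kb, pmax):
    """RED-style ECN marking: 0 below Kmin, linear up to Pmax at Kmax, 1 above Kmax."""
    if not 0 <= kmin_kb < kmax_kb:
        raise ValueError("require 0 <= kmin < kmax")
    if not 0.0 <= pmax <= 1.0:
        raise ValueError("pmax must be a probability")
    if queue_kb <= kmin_kb:
        return 0.0
    if queue_kb > kmax_kb:
        return 1.0
    return pmax * (queue_kb - kmin_kb) / (kmax_kb - kmin_kb)

# Hypothetical thresholds: start marking at 100 KB, mark everything above 400 KB.
for depth in (50, 250, 400, 500):
    print(depth, ecn_mark_probability(depth, kmin_kb=100, kmax_kb=400, pmax=0.2))
```

Lower thresholds signal congestion earlier and keep queues short at some cost in throughput; higher thresholds do the opposite, which is why the values need tuning per workload.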

I’ve sat in reviews where the debate wasn’t just protocol vs protocol; it was whether facilities could scale — cooling, power, and lighting — at the same pace as the GPUs. Poor aisle lighting slows maintenance during GPU outages. That is rarely on the slide deck, but it matters when minutes count.

7. Day-2 Operations: Telemetry, Automation, Lighting Integration

Building a fabric is Day 1. Day 2 is where failures, misconfigurations, and operational drag appear. The teams that thrive make observability and automation routine.

- Telemetry: streaming via gNMI/OpenConfig, sFlow/IPFIX, and in-band telemetry (INT) to catch microbursts and jitter.

- Automation: intent-based platforms (e.g., Juniper Apstra, Cisco NDFC/ACI) that validate designs and enforce compliance.

- Change management: golden configs, staged rollouts, canary changes, and clear rollback points.
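As one illustration of what high-frequency telemetry makes possible, the sketch below flags microbursts from a series of cumulative byte-counter samples. It assumes the samples have already been collected by whatever streaming pipeline is in use; the function name, the sample format, and the 90% threshold are all hypothetical.

```python
def find_bursts(samples, link_gbps, threshold=0.9):
    """Return (start, end, utilization) for intervals at or above the threshold.

    samples: list of (timestamp_seconds, cumulative_tx_bytes), oldest first.
    """
    capacity_bps = link_gbps * 1e9
    bursts = []
    for (t0, b0), (t1, b1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order samples
        utilization = (b1 - b0) * 8 / (dt * capacity_bps)
        if utilization >= threshold:
            bursts.append((t0, t1, utilization))
    return bursts

# Hypothetical 1 ms samples on a 100G port.
samples = [(0.000, 0), (0.001, 1_000_000), (0.002, 13_000_000), (0.003, 13_500_000)]
for start, end, util in find_bursts(samples, link_gbps=100):
    print(f"burst {start:.3f}s to {end:.3f}s at {util:.0%} of line rate")
```

Averaged over the full 3 ms, this port looks lightly loaded; only the millisecond-level view reveals the burst, which is why polling at multi-second intervals tends to miss these events.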

I’ve also seen operators integrate intelligent lighting sensors into their monitoring stack. Motion-sensor lighting saves energy and yields indirect presence data that can flag unusual after-hours activity near racks. It’s a small addition that supports both security and operations.

8. Migration & Reliability: Brownfield Strategies

Transitioning from three-tier to leaf–spine requires caution. Brownfield migrations are smoother when you:

- Build in parallel and validate the new fabric before moving live tenants.

- Migrate VRF-by-VRF to limit blast radius and simplify testing.

- Set rollback points in case overlays or routing policies misbehave under load.
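The VRF-by-VRF approach with rollback points can be expressed as a simple control loop. This is a sketch of the sequencing only: `move`, `validate`, and `rollback` are hypothetical callbacks standing in for whatever tooling actually performs those steps, and the VRF names are made up.

```python
def migrate_vrfs(vrfs, move, validate, rollback):
    """Migrate one VRF at a time; on a failed validation, roll it back and stop.

    Returns (migrated_vrfs, failed_vrf_or_None).
    """
    migrated = []
    for vrf in vrfs:
        move(vrf)
        if not validate(vrf):
            rollback(vrf)
            return migrated, vrf
        migrated.append(vrf)
    return migrated, None

# Stub callbacks that just record what happened; "green" fails validation.
log = []
done, failed = migrate_vrfs(
    ["blue", "green", "red"],
    move=lambda vrf: log.append(("move", vrf)),
    validate=lambda vrf: vrf != "green",
    rollback=lambda vrf: log.append(("rollback", vrf)),
)
print(done, failed, log)
```

Stopping at the first failure keeps the blast radius to a single VRF: already-migrated tenants stay on the new fabric, and untouched tenants remain on the old one until the problem is understood.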

Reliability choices that pay off:

- ECMP with fast convergence, plus BFD and fast reroute (FRR) for sub-second failover.

- Maintenance modes and software features such as stateful switchover (SSO), non-stop forwarding (NSF), and graceful restart (GR) to survive upgrades.

- Dual-circuit lighting and network paths so visibility isn’t lost when power hiccups.
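Whether BFD actually delivers sub-second failure detection depends on its timers. In asynchronous mode (RFC 5880), detection time is roughly the negotiated packet interval multiplied by the peer's detect multiplier. A small sketch of that arithmetic, with example timer values that are assumptions rather than recommendations:

```python
def bfd_detection_time_ms(local_required_min_rx_ms, remote_desired_min_tx_ms,
                          remote_detect_mult):
    """Approximate BFD async-mode detection time on the local system."""
    # The peer sends no faster than the slower of the two advertised intervals.
    interval_ms = max(local_required_min_rx_ms, remote_desired_min_tx_ms)
    return interval_ms * remote_detect_mult

# Example timers: 300 ms intervals with a multiplier of 3.
detection_ms = bfd_detection_time_ms(300, 300, 3)
print(f"failure detected after about {detection_ms} ms")
```

Detection time is only part of failover: routing convergence and forwarding-table updates add to it, so timers are usually chosen with the whole chain in mind.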

Lighting plays a role here too. During one migration, emergency lighting failed in a corridor during a power cut. Technicians hesitated, delaying failover validation. Fixtures like CAE’s Squarebeam Elite with emergency backup options help ensure the physical environment matches network reliability goals.

❓ Frequently Asked Questions

Q1. What is the best architecture for new data centers in 2025?

Leaf–spine with VXLAN/EVPN is the default choice: it scales well and is widely validated by major vendors.

Q2. Do AI workloads require InfiniBand?

Not always. RoCEv2 over Ethernet with tuned congestion control (ECN/DCQCN) can compete, but InfiniBand is still the default for many very large GPU clusters.

Q3. How does lighting affect data center network performance?

Indirectly. Poor lighting increases maintenance errors and adds unnecessary heat load. Efficient fixtures like the Quattro Triproof Batten reduce strain on cooling and improve technician safety.

Q4. What oversubscription ratios are ideal?

General compute: 2:1 is common. GPU clusters: 1.2:1 or lower depending on workload patterns.

Q5. Why integrate lighting into Day-2 operations?

Because intelligent lighting sensors provide both energy savings and security data — complementing traditional telemetry.