Data Center Network Limitations Explained: Performance, Scalability, and Design Best Practices


- What a Data Center Network Really Is

- Architectures and Topologies

- Bandwidth, Latency, and Throughput Limits

- Power and Cooling Constraints

- Redundancy and Fault Tolerance

- Standards, Metrics, and Compliance

- Emerging Technologies for Network Constraints

- Planning, ROI, and Future Outlook

- Frequently Asked Questions (FAQ)

Key Takeaways

| Question | Quick Answer |

|---|---|

| What is a data center network? | A structured system of switches, routers, servers, and interconnects handling traffic inside and outside the facility. |

| What are the limits? | Bandwidth bottlenecks, latency, scalability, power, cooling, physical space, and cost. |

| How do designs overcome limits? | Using spine-leaf topologies, redundancy strategies (N+1, 2N), high-speed optics, and efficient cabling. |

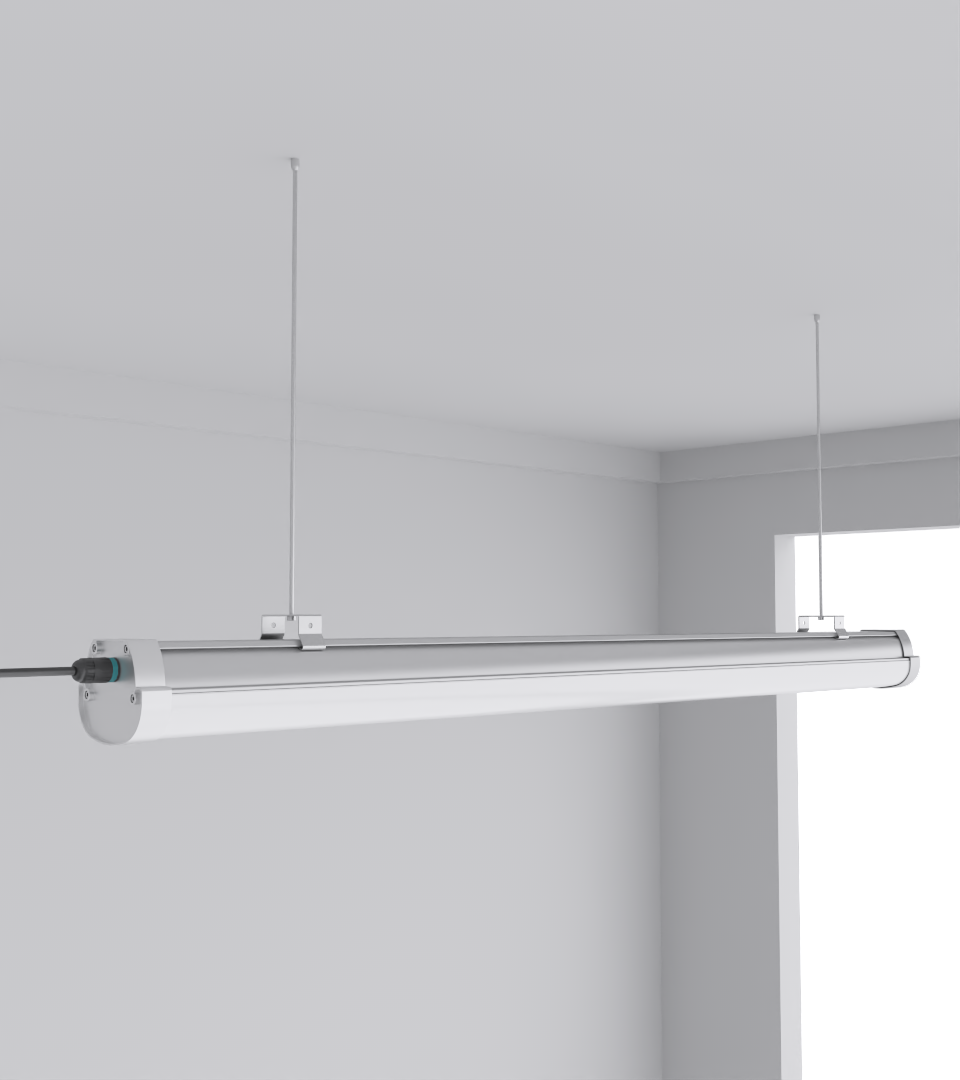

| Which products are relevant? | Industrial LED fixtures like SquareBeam Elite and Quattro Triproof Batten that address energy and cooling impacts. |

| What standards apply? | Uptime Institute tiers, TIA-942, ISO certifications, and power usage metrics like PUE. |

| Who needs to know this? | Data center architects, facility managers, IT operations teams, and contractors planning infrastructure upgrades. |

1. What a Data Center Network Really Is

A data center network is more than cabling and switches. It is the bloodstream of the facility, carrying traffic east-west (between servers inside the data center) and north-south (between the data center and external users or systems). At its simplest, it links compute, storage, and external connectivity. Scale adds pressure: thousands of racks, millions of concurrent sessions, and constant uptime demands.
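To make the east-west/north-south distinction concrete, here is a minimal Python sketch that labels a flow by whether both endpoints fall inside the facility's own address space. The prefixes in `INTERNAL_PREFIXES` and the helper names are hypothetical; in practice the boundary may be defined by routing domains or VRFs rather than a fixed prefix list.

```python
import ipaddress

# Hypothetical internal address space for the facility; substitute your own prefixes.
INTERNAL_PREFIXES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
]

def is_internal(addr: str) -> bool:
    """Return True if addr falls inside any internal prefix."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in INTERNAL_PREFIXES)

def classify_flow(src: str, dst: str) -> str:
    """East-west if both endpoints are internal, otherwise north-south."""
    if is_internal(src) and is_internal(dst):
        return "east-west"
    return "north-south"

flows = [
    ("10.1.2.3", "10.4.5.6"),     # server to server
    ("10.1.2.3", "203.0.113.7"),  # server to an external client
]
for src, dst in flows:
    print(src, "->", dst, classify_flow(src, dst))
```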

2. Architectures and Topologies

Data center networks use different topologies:

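- Spine-leaf (a two-tier Clos): every leaf connects to every spine, so traffic between any two leaves crosses the same number of hops. Latency is predictable, and the fabric scales by adding leaves or spines.

- Fat-tree/Clos with three or more tiers: balanced traffic across many equal-cost paths, but cabling-heavy.

- Legacy 3-tier (access, aggregation, core): designed mainly for north-south traffic, so east-west flows cross more hops and shared uplinks, creating bottlenecks.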
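The spine-leaf rules above translate directly into sizing arithmetic. The sketch below assumes each leaf has exactly one uplink to each spine and each spine has one port per leaf; the class name `SpineLeafDesign` and the port counts in the example are hypothetical, not a vendor configuration.

```python
from dataclasses import dataclass

@dataclass
class SpineLeafDesign:
    leaf_downlinks: int  # server-facing ports per leaf
    server_gbps: float   # speed of each server-facing port
    leaf_uplinks: int    # uplinks per leaf, one to each spine
    uplink_gbps: float   # speed of each uplink
    spine_ports: int     # ports per spine, one per leaf

    @property
    def spines(self) -> int:
        # Every leaf connects to every spine, so spine count equals uplinks per leaf.
        return self.leaf_uplinks

    @property
    def max_leaves(self) -> int:
        # Each spine port terminates exactly one leaf.
        return self.spine_ports

    @property
    def max_servers(self) -> int:
        return self.max_leaves * self.leaf_downlinks

    @property
    def oversubscription(self) -> float:
        # Server-facing bandwidth divided by uplink bandwidth on each leaf.
        return (self.leaf_downlinks * self.server_gbps) / (self.leaf_uplinks * self.uplink_gbps)

# Hypothetical example: 48 x 25G server ports and 6 x 100G uplinks per leaf, 32-port spines.
d = SpineLeafDesign(leaf_downlinks=48, server_gbps=25, leaf_uplinks=6,
                    uplink_gbps=100, spine_ports=32)
print(d.spines, d.max_leaves, d.max_servers, f"{d.oversubscription:.1f}:1")
```

In this layout, traffic between servers on different leaves always follows leaf, spine, leaf (three switch hops), which is where the predictable latency comes from. Growing beyond `max_servers` means larger spines or an extra tier.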

3. Bandwidth, Latency, and Throughput Limits

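Why do networks feel "limited"? A common cause is oversubscription pushed too far: when a switch's server-facing bandwidth greatly exceeds its uplink bandwidth, uplinks congest during bursts and packets queue or drop. Latency sources include:

- Serialization delay: the time to clock each frame onto a link, at NICs and at every switch port

- Switch hops: forwarding and queuing delay at each switch

- Propagation delay across fiber links (roughly 5 µs per km)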
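The three latency sources can be added into a rough one-way budget. This is a minimal sketch assuming store-and-forward switching (so the frame is re-serialized on every link) and ignoring queuing delay, which can dominate under congestion. The per-hop switch latency and fiber length in the example are hypothetical values, not measurements.

```python
# Approximate one-way propagation delay in optical fiber (light travels ~2e8 m/s in glass).
FIBER_US_PER_KM = 5.0

def serialization_delay_us(frame_bytes: int, link_gbps: float) -> float:
    """Time to clock one frame onto a link, in microseconds."""
    bits = frame_bytes * 8
    bits_per_us = link_gbps * 1_000  # 1 Gbps = 1,000 bits per microsecond
    return bits / bits_per_us

def latency_budget_us(frame_bytes: int, link_gbps: float, hops: int,
                      per_hop_us: float, fiber_km: float) -> dict[str, float]:
    """Split one-way latency into serialization, switching, and propagation.

    Assumes store-and-forward switching: a path with `hops` switches has
    hops + 1 links, and the frame is serialized once per link.
    Queuing delay under congestion is NOT modeled.
    """
    links = hops + 1
    budget = {
        "serialization": links * serialization_delay_us(frame_bytes, link_gbps),
        "switching": hops * per_hop_us,
        "propagation": fiber_km * FIBER_US_PER_KM,
    }
    budget["total"] = sum(budget.values())
    return budget

# Hypothetical spine-leaf path: leaf -> spine -> leaf (3 switch hops),
# 1500-byte frames on 25G links, 1 us per switch, 0.5 km of fiber in total.
for part, us in latency_budget_us(1500, 25, hops=3, per_hop_us=1.0, fiber_km=0.5).items():
    print(f"{part:>13}: {us:.2f} us")
```

Under these assumptions the total is a few microseconds, far below a 1 ms target. When real paths miss that target, the cause is usually queuing on oversubscribed links rather than the fixed terms shown here.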

4. Power and Cooling Constraints

Networking gear consumes power and generates heat. In one project, switches accounted for 12% of total rack load, and the cooling had not been sized for it. Solutions include:

- Cold aisle containment

- Energy-efficient LED lighting, which adds less heat to the room

- Better airflow and cable tray management, so cabling does not block air paths
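A share like "12% of rack load" is easier to plan around once it is expressed as heat. The sketch below uses the standard conversions (1 W is about 3.412 BTU/hr; one ton of refrigeration is 12,000 BTU/hr). The rack power and rack count in the example are hypothetical.

```python
BTU_PER_HR_PER_WATT = 3.412  # 1 W of electrical load becomes ~3.412 BTU/hr of heat
BTU_PER_HR_PER_TON = 12_000  # 1 ton of refrigeration

def network_heat_load(rack_kw: float, network_share: float, racks: int) -> dict[str, float]:
    """Estimate the heat produced by network gear across a row of racks."""
    network_w = rack_kw * 1000 * network_share * racks
    btu_hr = network_w * BTU_PER_HR_PER_WATT
    return {"watts": network_w, "btu_hr": btu_hr, "tons": btu_hr / BTU_PER_HR_PER_TON}

# Hypothetical row: 20 racks at 10 kW each, with switches drawing 12% of rack load.
print(network_heat_load(rack_kw=10, network_share=0.12, racks=20))
```

For this example, the switches alone contribute roughly 24 kW, or nearly 7 tons of cooling, which is easy to miss if cooling is sized from server load only.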

5. Redundancy and Fault Tolerance

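Uptime Institute Tiers classify facility infrastructure (power and cooling paths) by redundancy level:

- Tier I: basic capacity, single path, no redundancy

- Tier II: redundant capacity components (N+1), single distribution path

- Tier III: concurrently maintainable, N+1 redundancy with multiple distribution paths

- Tier IV: fully fault tolerant, typically 2N or 2N+1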
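The N notation counts units against the load they must carry: N is the minimum number of units needed, N+1 adds one spare, 2N duplicates the whole set, and 2N+1 adds a spare on top of that. A minimal sketch, with a hypothetical load and module size:

```python
import math

def units_required(load_kw: float, unit_kw: float) -> dict[str, int]:
    """Units needed under common redundancy models for a given load."""
    n = math.ceil(load_kw / unit_kw)  # N: minimum units that can carry the load
    return {"N": n, "N+1": n + 1, "2N": 2 * n, "2N+1": 2 * n + 1}

# Hypothetical example: 900 kW of critical load served by 250 kW UPS modules.
print(units_required(900, 250))
```

Moving from N+1 to 2N roughly doubles the installed units, which is why the jump from Tier III to Tier IV has a large cost and space impact.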

6. Standards, Metrics, and Compliance

Important benchmarks include:

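- Latency targets, such as sub-1 ms for east-west traffic

- PUE (Power Usage Effectiveness): total facility power divided by IT equipment power, where 1.0 is the theoretical ideal

- TIA-942, which covers data center infrastructure including cabling

- ISO certifications for quality and safety (for example, ISO 9001 for quality management)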
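PUE is a simple ratio, so it is easy to see how non-IT loads such as lighting move it. A minimal sketch; the facility and IT figures, and the 20 kW lighting saving, are hypothetical.

```python
def pue(total_facility_kw: float, it_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_kw

# Hypothetical facility: 1,000 kW of IT load, 1,500 kW total.
before = pue(1500, 1000)
# Suppose more efficient lighting cuts 20 kW of non-IT load (IT load unchanged).
after = pue(1500 - 20, 1000)
print(f"PUE before: {before:.2f}, after: {after:.2f}")
```

The IT load stays the same, so every kilowatt removed from lighting or cooling lowers the numerator and moves PUE toward 1.0.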

7. Emerging Technologies for Network Constraints

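Several newer technologies ease these constraints:

- SDN (software-defined networking) for centralized traffic engineering

- 400G/800G optical interconnects that raise per-port bandwidth

- Photonic switching, which switches traffic optically instead of converting it to electrical signals at each hop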
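One practical effect of 400G/800G optics is fewer ports and cables for the same capacity. The sketch below counts the uplinks a hypothetical leaf would need at 1:1 oversubscription; it ignores breakout cabling and optic cost, which also shape real choices.

```python
import math

def uplinks_needed(target_gbps: float, port_gbps: float) -> int:
    """Minimum uplink ports to provide target_gbps of capacity."""
    return math.ceil(target_gbps / port_gbps)

# Hypothetical leaf: 48 x 25G server ports, uplinks sized for 1:1 oversubscription.
target = 48 * 25  # 1,200 Gbps
for speed in (100, 400, 800):
    print(f"{speed}G: {uplinks_needed(target, speed)} uplinks")
```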

8. Planning, ROI, and Future Outlook

Checklist for constrained network planning:

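- Forecast workload and traffic growth, especially east-west, over 3–5 years

- Choose a redundancy model (N+1, 2N) and map it to each component

- Size power and cooling to include network gear, not just servers

- Specify energy-efficient lighting to reduce non-IT heat load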
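The first checklist item can be turned into a quick check of when today's fabric runs out. This sketch assumes steady compound annual growth, which is a simplification; the current demand, capacity, and growth rates in the example are hypothetical.

```python
from typing import Optional

def project_demand(current_gbps: float, annual_growth: float, years: int) -> list[float]:
    """Demand at the end of each year under compound growth."""
    return [current_gbps * (1 + annual_growth) ** y for y in range(1, years + 1)]

def first_year_over_capacity(current_gbps: float, capacity_gbps: float,
                             annual_growth: float, horizon_years: int = 5) -> Optional[int]:
    """First year in which projected demand exceeds capacity, or None within the horizon."""
    demand = project_demand(current_gbps, annual_growth, horizon_years)
    for year, gbps in enumerate(demand, start=1):
        if gbps > capacity_gbps:
            return year
    return None

# Hypothetical fabric: 400 Gbps of east-west demand today, 1,200 Gbps of usable capacity.
print(first_year_over_capacity(400, 1200, annual_growth=0.35))
print(first_year_over_capacity(400, 1200, annual_growth=0.10))
```

At 35% annual growth the fabric is exhausted in year 4; at 10% it lasts the full five-year horizon. Running several growth scenarios like this shows how sensitive the upgrade date is to the forecast.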

Frequently Asked Questions (FAQ)

Q1: What does “network limited” mean in a data center?

It refers to performance or design constraints — bandwidth, latency, cooling, space, or cost — that cap what the network can handle.

Q2: How do you reduce latency in a data center network?

Use spine-leaf topologies, minimize switch hops, and deploy high-speed optics.

Q3: What role does lighting play in network limits?

Efficient fixtures reduce heat load, easing cooling pressure that also affects networking gear.

Q4: Which standards matter for data center networks?

Uptime Institute Tiers, TIA-942 cabling, ISO certifications, and metrics like latency and PUE.

Q5: What’s the most common mistake in constrained designs?

Ignoring future east-west traffic growth. Networks often fail not from lack of bandwidth, but from poor scalability planning.