Data Center Networking in Computer Networks: Architectures, Metrics, and AI-Driven Demands Explained

- Introduction: Why Data Center Networking Matters

- Core Components of Data Center Networking

- Topologies & Architectures: Choosing the Right Fabric

- Traffic Patterns: East-West vs North-South

- Performance Metrics & Benchmarking

- Emerging Technologies in Data Center Networking

- Designing for AI & High-Density Workloads

- Security, Reliability & Sustainability in Data Center Networking

- Frequently Asked Questions (FAQ)

Key Takeaways

| Aspect | Summary |
|---|---|
| Definition | Data center networking connects servers, storage, and applications using structured topologies like spine-leaf and Clos. |
| Core Components | Switches, routers, fiber cabling, optical interconnects, and intelligent lighting for efficiency and safety. |
| Topologies | Traditional 3-tier, spine-leaf, fat-tree, and disaggregated designs, each with its own trade-offs in latency and scalability. |
| AI/High-Density Impact | AI workloads demand ultra-low latency, high throughput, and dense rack interconnects. |
| Performance Metrics | Bandwidth, latency, bisection bandwidth, jitter, oversubscription ratios. |
| Security | Zero trust, micro-segmentation, encryption, firmware integrity. |
| Sustainability | Energy efficiency, cooling, and lighting solutions reduce operational costs and environmental footprint. |
| CAE Lighting Role | Provides specialized SquareBeam Elite and Quattro Triproof Batten lighting to support secure, efficient data center environments. |

1. Introduction: Why Data Center Networking Matters

Data center networking is more than plugging servers into switches. It defines how workloads travel between racks, how quickly AI clusters exchange gradients, and whether a facility can sustain 99.999% uptime.

In my own experience working on a hyperscale retrofit in Malaysia, poor topology planning meant the cooling system had to fight harder against hotspots. Lighting design also affected technician safety during overnight maintenance. These two seemingly unrelated systems — networking and lighting — intersected in real-world operations.

- North-south traffic (client ↔ server) still matters, but east-west dominates.

- AI workloads have shifted bandwidth expectations.

- Energy constraints mean efficiency is no longer optional.

2. Core Components of Data Center Networking

Networking in data centers is a layered system with each device type playing a role:

- Access switches connect servers.

- Aggregation/spine switches interconnect the access layer and carry the aggregated traffic between racks.

- Routers connect to WAN/Internet.

- Optical interconnects carry east-west flows over fiber.

| Layer | Devices | Notes |

|---|---|---|

| Access | Top-of-rack switches | Low latency, high port density |

| Spine | Modular chassis / fixed 400G switches | Central to scalability |

| Interconnect | Fiber, optics, transceivers | Latency-sensitive |

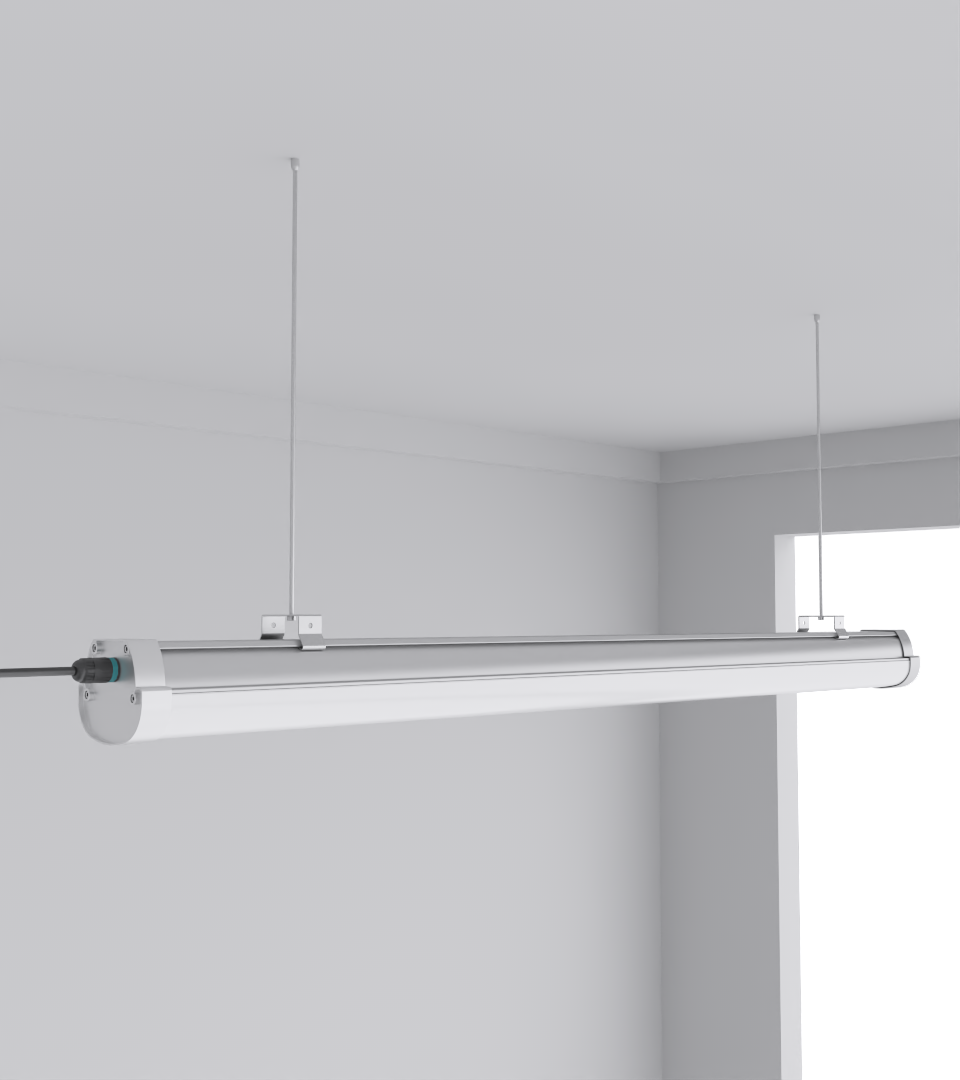

| Lighting | SeamLine Batten | Improves visibility, reduces downtime |

3. Topologies & Architectures: Choosing the Right Fabric

Common Topologies

- 3-Tier (Access → Aggregation → Core): Simple, but limited scalability.

- Spine-Leaf / Clos: Most common in modern DCs; any two leaves are the same number of hops apart, which keeps latency predictable.

- Fat-Tree & BCube: Academic models adapted for scale-out clusters.

- Disaggregated Fabrics: Separate compute, storage, and networking into independent resource pools.
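
To make the scalability trade-off concrete, here is a minimal sizing sketch. It assumes the textbook k-ary fat-tree built from identical k-port switches, and a two-tier leaf-spine fabric in which every leaf has exactly one uplink to every spine. The function names and the port counts in the example are illustrative assumptions, not figures from a specific deployment.

```python
def fat_tree_size(k: int) -> dict[str, int]:
    """Element counts for a k-ary fat-tree built from identical k-port switches."""
    if k < 2 or k % 2 != 0:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    return {
        "pods": k,
        "edge_switches": k * half,         # k/2 edge switches per pod
        "aggregation_switches": k * half,  # k/2 aggregation switches per pod
        "core_switches": half * half,      # (k/2)^2
        "hosts": k * half * half,          # k^3 / 4
    }


def leaf_spine_servers(leaf_ports: int, spine_ports: int, spines: int) -> int:
    """Server-facing ports in a two-tier fabric (one uplink from each leaf to each spine)."""
    if spines >= leaf_ports:
        raise ValueError("a leaf needs ports left over for servers")
    max_leaves = spine_ports                     # each spine port terminates one leaf
    server_ports_per_leaf = leaf_ports - spines  # remaining ports face servers
    return max_leaves * server_ports_per_leaf


if __name__ == "__main__":
    print(fat_tree_size(48))
    # Hypothetical hardware: 56-port leaves, 64-port spines, 8 spines.
    print(leaf_spine_servers(leaf_ports=56, spine_ports=64, spines=8))
```

The point of the sketch is that the spine port count caps the number of leaves, so growing past that limit means adding a tier or moving to higher-radix switches.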

The Quattro Triproof Batten is often deployed in pod-based DC designs where ceiling heights are low. A lighting miscalculation in such pods once forced us to rewire cable trays because glare made the fiber labels hard to read.

4. Traffic Patterns: East-West vs North-South

Modern applications (microservices, Kubernetes pods, AI training jobs) generate mostly east-west traffic. Unlike the client-server north-south model, east-west traffic requires:

- Low latency spine fabrics.

- Low oversubscription ratios (ideally close to 1:1), because server-to-server flows cross the leaf uplinks.

- Buffering strategies for microbursts.
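
When auditing flow logs, the east-west versus north-south split can be approximated by checking whether both endpoints sit inside the facility's address space. The sketch below assumes the data center uses `10.0.0.0/8` internally; that prefix and the function names are placeholders to swap for your own addressing plan.

```python
import ipaddress

# Assumption: every address inside the data center falls in these prefixes.
DC_PREFIXES = [ipaddress.ip_network("10.0.0.0/8")]


def is_internal(addr: str) -> bool:
    """True if the address belongs to one of the data center prefixes."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in DC_PREFIXES)


def classify_flow(src: str, dst: str) -> str:
    """East-west if both ends are internal; anything crossing the edge is north-south."""
    if is_internal(src) and is_internal(dst):
        return "east-west"
    return "north-south"


if __name__ == "__main__":
    print(classify_flow("10.1.2.3", "10.4.5.6"))     # server to server
    print(classify_flow("203.0.113.7", "10.1.2.3"))  # external client to server
```

Run over a day of flow records, a tally like this gives a rough east-west share to size the spine layer against.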

Expert Note: In one edge DC project, technicians accidentally routed monitoring traffic through production uplinks. It looked minor at just 2 Gbps, but during a failover event those monitoring packets increased latency under congestion by 15%. Small oversights cascade into downtime.

5. Performance Metrics & Benchmarking

A network is only as good as the metrics it can sustain.

Key Metrics

- Throughput (Gbps/Tbps)

- Latency (µs range in AI fabrics)

- Jitter (variance in latency)

- Bisection bandwidth (critical for distributed AI training)

- Oversubscription ratios (e.g., 3:1 vs 5:1)
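
The oversubscription ratio in the list above is simply a switch's server-facing bandwidth divided by its uplink bandwidth. A small sketch of that arithmetic follows; the port counts and speeds in the example are assumed values chosen for illustration.

```python
from fractions import Fraction


def oversubscription(server_ports: int, server_gbps: int,
                     uplinks: int, uplink_gbps: int) -> Fraction:
    """Downlink bandwidth divided by uplink bandwidth for one leaf switch."""
    downlink = server_ports * server_gbps
    uplink = uplinks * uplink_gbps
    if uplink <= 0:
        raise ValueError("a leaf needs at least one uplink")
    return Fraction(downlink, uplink)


def as_ratio(r: Fraction) -> str:
    """Format a Fraction in the usual N:M notation."""
    return f"{r.numerator}:{r.denominator}"


if __name__ == "__main__":
    # Hypothetical leaf: 48 x 25G server ports, 4 x 100G uplinks.
    print(as_ratio(oversubscription(48, 25, 4, 100)))
    # Hypothetical non-blocking leaf: 32 x 100G down, 8 x 400G up.
    print(as_ratio(oversubscription(32, 100, 8, 400)))
```

A result of 1:1 means the leaf is non-blocking; anything higher means servers can offer more traffic than the uplinks can carry.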

| Metric | Acceptable Range | Notes |
|---|---|---|
| Latency | < 10 µs (intra-rack) | Training clusters need consistency |
| Jitter | < 1 µs | Bursty workloads punish higher jitter |
| Bisection BW | Full (1:1, non-blocking) ideally | Ensures even east-west distribution |
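
To check measured latency against ranges like these, a short summary function is usually enough. The sketch below takes jitter to be the standard deviation of the latency samples, which is one common reading of "variance in latency", and uses a nearest-rank 99th percentile. Both choices, and the function name, are assumptions rather than a fixed standard.

```python
import statistics


def latency_summary(samples_us: list[float]) -> dict[str, float]:
    """Mean, jitter (population standard deviation) and p99 of latency samples in µs."""
    if not samples_us:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_us)
    n = len(ordered)
    # Nearest-rank p99: ceil(0.99 * n), computed with integers to avoid float rounding.
    rank = max(1, -(-99 * n // 100))
    return {
        "mean_us": statistics.fmean(ordered),
        "jitter_us": statistics.pstdev(ordered),
        "p99_us": ordered[rank - 1],
    }


if __name__ == "__main__":
    summary = latency_summary([8.0, 9.5, 10.0, 10.5, 12.0])
    print(summary)
    print("jitter within 1 µs target:", summary["jitter_us"] < 1.0)
```

Reporting p99 alongside the mean matters because training clusters are limited by their slowest exchanges, not the average one.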

Tools Used

- DCNetBench for simulation.

- Real traffic replay in staging labs.

- Telemetry baked into switches.

6. Emerging Technologies in Data Center Networking

- 800G Ethernet is entering deployments.

- Co-packaged optics (CPO) reduce latency and energy per bit.

- SmartNICs and DPUs offload networking, storage, and security tasks from host CPUs.

- Intent-based networking allows policy-driven fabrics.

AI-Specific Demands

- AI accelerators rely on RDMA (Remote Direct Memory Access) to move data between nodes without involving the host CPU.

- The UALink consortium is defining an open standard for accelerator-to-accelerator interconnects.

- Optical fabrics handle 50–100 Tbps rack-to-rack.

7. Designing for AI & High-Density Workloads

AI clusters change everything:

- Training a GPT-level model can require hundreds of Gbps of network bandwidth per node.

- Racks can draw more than 30 kW, requiring precision cooling.

- Latency under 5 µs is often the goal.
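
A back-of-the-envelope estimate shows why per-node bandwidth matters so much. The sketch below assumes gradients are synchronized with a ring all-reduce, in which each node sends roughly 2(N-1)/N times the gradient size per step. It models bandwidth only and ignores per-hop latency and protocol overhead, so treat the result as a lower bound. The function name and the example numbers are illustrative.

```python
def ring_allreduce_seconds(gradient_bytes: float, nodes: int, link_gbps: float) -> float:
    """Bandwidth-only lower bound on one ring all-reduce across `nodes` nodes."""
    if nodes < 2:
        return 0.0  # nothing to exchange with a single node
    if link_gbps <= 0:
        raise ValueError("link speed must be positive")
    # Each node sends 2 * (N - 1) / N of the gradient volume over its link.
    bytes_on_wire = 2 * (nodes - 1) / nodes * gradient_bytes
    return bytes_on_wire * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    # Hypothetical job: 10 GB of gradients, 64 nodes.
    for gbps in (100, 400):
        seconds = ring_allreduce_seconds(10e9, 64, gbps)
        print(f"{gbps} Gbps per node: {seconds:.2f} s per synchronization")
```

Because this cost recurs on every training step, quadrupling the link speed cuts the synchronization time by the same factor, which is where the pressure for hundreds of Gbps per node comes from.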

Checklist for AI Networking

- RDMA support in NICs.

- Non-blocking spine-leaf fabric.

- Sufficient bisection bandwidth.

- Thermal planning alongside optics.

Personal anecdote: during a Thai data center deployment, we underestimated rack power density by 20%. The network gear could handle it, but the integration of cooling and lighting became the bottleneck.

8. Security, Reliability & Sustainability in Data Center Networking

Security Practices

- Zero Trust Architecture for east-west flows.

- Encryption in transit, with MACsec on links and TLS for application sessions.

- Micro-segmentation reduces lateral movement.
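
Micro-segmentation boils down to a default-deny rule set between workload groups. The sketch below models it as an explicit allowlist of (source segment, destination segment, port) tuples. The segment names, ports, and function name are hypothetical; a real fabric would enforce the same idea in switch ACLs, host firewalls, or a DPU.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src_segment: str
    dst_segment: str
    dst_port: int


# Assumption: a three-tier application with only two permitted paths.
ALLOWED_FLOWS = frozenset({
    Flow("web", "app", 8080),
    Flow("app", "db", 5432),
})


def is_permitted(flow: Flow) -> bool:
    """Default deny: a flow passes only if it is explicitly allowlisted."""
    return flow in ALLOWED_FLOWS


if __name__ == "__main__":
    print(is_permitted(Flow("web", "app", 8080)))  # expected application path
    print(is_permitted(Flow("web", "db", 5432)))   # lateral shortcut, denied
```

A compromised web server in this model cannot reach the database directly, which is the lateral movement the policy is meant to stop.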

Reliability

- Redundant uplinks and power feeds.

- MTBF analysis for switches.

- AI-assisted predictive monitoring based on switch telemetry.
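
MTBF figures become useful once they are turned into availability. The sketch below uses the standard steady-state formula, availability = MTBF / (MTBF + MTTR), and assumes redundant units fail independently, which real deployments only approximate. The MTBF and MTTR values in the example are made up for illustration.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability of a single component."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def redundant_availability(single: float, copies: int) -> float:
    """Availability of `copies` parallel units, assuming independent failures."""
    return 1 - (1 - single) ** copies


def downtime_minutes_per_year(avail: float) -> float:
    """Expected downtime over a 365-day year."""
    return (1 - avail) * 365 * 24 * 60


if __name__ == "__main__":
    # Hypothetical switch: 200,000 h MTBF, 4 h to replace.
    one = availability(200_000, 4)
    pair = redundant_availability(one, 2)
    print(f"single uplink: {downtime_minutes_per_year(one):.2f} min/year")
    print(f"redundant pair: {downtime_minutes_per_year(pair):.6f} min/year")
    print(f"five nines budget: {downtime_minutes_per_year(0.99999):.2f} min/year")
```

Five nines leaves a budget of only a few minutes per year, which is why redundant uplinks and power feeds appear at the top of the list.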

Sustainability

- Networking consumes 15–20% of DC power budgets.

- Efficient ASICs and optical fabrics reduce per-bit energy.

- Lighting choices like SquareBeam Elite lower heat load on HVAC systems.

Frequently Asked Questions (FAQ)

Q1. What is the difference between east-west and north-south traffic?

East-west traffic flows server-to-server inside the data center, while north-south traffic flows between clients and servers, entering or leaving the facility.

Q2. Which topology is best for AI workloads?

Spine-leaf or Clos, due to predictable latency and high bisection bandwidth.

Q3. Why does lighting matter in data center networking?

Safe, glare-free lighting reduces technician errors during cable patching and hardware replacement.

Q4. What role does CAE Lighting play in data center networking?

By supplying efficient luminaires like the Quattro Triproof Batten, CAE supports operational safety and reduces energy consumption in mission-critical facilities.

Q5. What’s the next big technology in data center networking?

Co-packaged optics (CPO) and AI-specific interconnects such as UALink are likely to dominate by the late 2020s.