Data Center Power and Cooling: Technical Guide to Efficiency, Redundancy, and Emerging Trends


- The Role of Power and Cooling in Modern Data Centers

- Power Infrastructure Fundamentals

- Cooling Technologies: Air, Liquid, and Hybrid Systems

- Best Practices in Facility Layout and Airflow Management

- Metrics and Monitoring: PUE, DCiE, CUE, and WUE

- Sustainability and Environmental Considerations

- Emerging Trends in 2025 and Beyond

- Cost, ROI, and Case Studies

- Frequently Asked Questions (FAQ)

Key Takeaways

| Question | Short Answer |

|---|---|

| What is PUE in data centers? | Power Usage Effectiveness (PUE) measures energy efficiency; <1.5 is considered strong. |

| What are the main cooling methods? | Air cooling, free cooling, direct liquid cooling, and immersion systems. |

| How much of a data center’s energy is cooling? | Typically 30–40% of total power is used for cooling infrastructure. |

| How do high-density AI workloads affect cooling? | They increase rack thermal loads, often requiring liquid or hybrid cooling. |

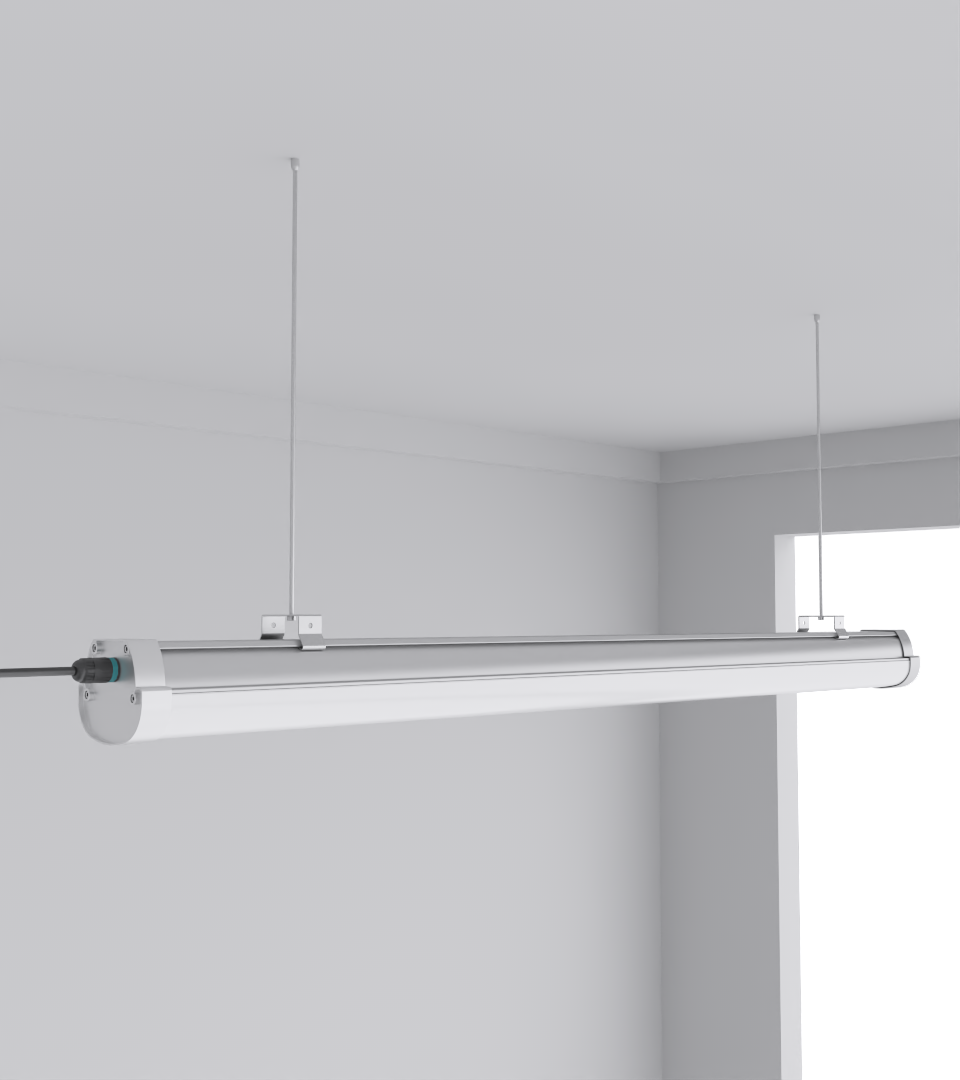

| What role does lighting play in power & cooling? | Low-heat, efficient LED fixtures reduce unnecessary cooling demand. |

| How to ensure redundancy? | N+1 or 2N architectures for both power and cooling prevent downtime. |

| What’s the biggest trend in 2025? | Immersion cooling adoption for GPU-dense racks and integration with renewables. |

1. The Role of Power and Cooling in Modern Data Centers

Every modern data center depends on two lifelines: uninterrupted power delivery and effective cooling. Without them, servers fail, uptime agreements are breached, and hardware lifespans shrink dramatically. Operators know that even small inefficiencies compound into massive operating costs.

- Power systems include: utility feeds, UPS, backup generators, PDUs, and distribution lines.

- Cooling systems include: air-based CRAC/CRAH units, chilled water systems, containment layouts, and increasingly liquid or immersion cooling.

In Guangdong, one project I oversaw had racks rated at 12 kW each, but the cooling was not matched to that density. The result was constant thermal alarms. After we redesigned the airflow containment, energy bills dropped 18%.

2. Power Infrastructure Fundamentals

Data centers demand consistent, clean, and redundant power. Failures here cascade instantly.

- Utility Supply & Grid: Dual feeds preferred, especially in regions with unstable grids.

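- UPS (Uninterruptible Power Supply): A double-conversion (online) UPS continuously converts incoming AC to DC and back to AC, so the load always receives a clean sine wave isolated from utility disturbances.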

- Backup Generators: Diesel is still dominant, but natural gas and dual-fuel systems are emerging.

- PDUs and Distribution: Rack PDUs now support outlet-level monitoring for load balancing.

3. Cooling Technologies: Air, Liquid, and Hybrid Systems

Cooling typically consumes 30–40% of a data center’s total electricity. Choosing the wrong system can lock operators into excessive OPEX for decades.

- Air Cooling: CRAC/CRAH units, aisle containment, raised floors.

- Liquid Cooling: Direct-to-chip cold plates, rear-door heat exchangers.

- Immersion Cooling: Servers submerged in dielectric fluid, ideal for AI/ML racks.

- Hybrid: Air + liquid combinations for staged thermal management.

4. Best Practices in Facility Layout and Airflow Management

Data centers live or die by airflow. I’ve seen facilities with top-tier CRACs but no containment, wasting thousands monthly.

- Hot aisle / cold aisle containment prevents mixing of exhaust and intake air.

- Blanking panels seal unused rack space so hot exhaust air cannot recirculate to server intakes, which reduces hot spots.

- Raised floor design improves under-floor pressure distribution.

- Cable management avoids blocking airflow under floors and in overhead trays.

5. Metrics and Monitoring: PUE, DCiE, CUE, and WUE

You cannot optimize what you don’t measure. The most respected KPI is PUE (Power Usage Effectiveness).

- PUE = Total Facility Power ÷ IT Equipment Power

- A PUE below 1.5 is good; below 1.3 is exceptional.

- Other metrics: DCiE (Data Center infrastructure Efficiency, the inverse of PUE expressed as a percentage), CUE (Carbon Usage Effectiveness), WUE (Water Usage Effectiveness).
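The metrics above reduce to simple ratios. The following is a minimal sketch in Python; the function names, the "needs improvement" label, and the sample readings are illustrative assumptions, while the 1.5 and 1.3 thresholds come from the list above. CUE and WUE are written here in their commonly used forms (kg of CO2 and liters of water per kWh of IT energy).

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def dcie_percent(total_facility_kw: float, it_equipment_kw: float) -> float:
    """DCiE: the inverse of PUE, expressed as a percentage."""
    return 100.0 / pue(total_facility_kw, it_equipment_kw)


def cue(co2_kg: float, it_energy_kwh: float) -> float:
    """Carbon Usage Effectiveness in kg CO2 per kWh of IT energy."""
    return co2_kg / it_energy_kwh


def wue(water_liters: float, it_energy_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT energy."""
    return water_liters / it_energy_kwh


def rate_pue(value: float) -> str:
    """Classify a PUE value using the thresholds quoted in this guide."""
    if value < 1.3:
        return "exceptional"
    if value < 1.5:
        return "good"
    return "needs improvement"  # label is an assumption, not from the guide


# Hypothetical meter readings for illustration only.
total_kw = 1400.0
it_kw = 1000.0
p = pue(total_kw, it_kw)
print(f"PUE = {p:.2f} ({rate_pue(p)}), DCiE = {dcie_percent(total_kw, it_kw):.1f}%")
# prints: PUE = 1.40 (good), DCiE = 71.4%
```

In practice the inputs would come from metered values averaged over a reporting period rather than a single instantaneous reading.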

Facilities should deploy rack-level sensors for power draw and thermal imaging cameras for real-time hot spot monitoring.

6. Sustainability and Environmental Considerations

Operators are under pressure from regulators and clients to cut carbon and water usage. Cooling, especially evaporative towers, can be water-intensive.

- Waste heat reuse: feeding district heating networks.

- On-site renewables: pairing solar or wind with battery storage.

- Dry cooling in water-scarce regions.

- ISO 14001 certification for environmental management.

7. Emerging Trends in 2025 and Beyond

The acceleration of AI workloads is the single biggest disruptor in power and cooling. Racks are pushing beyond 40 kW density, forcing new approaches.

- Immersion Cooling Tanks for GPU clusters.

- Direct-to-chip liquid cooling for balanced density racks.

- AI-driven cooling optimization using machine learning.

- Microgrids integrating renewables with storage to stabilize utility fluctuations.
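To see why rack density forces new approaches, it helps to estimate the airflow an air-cooled rack would need. The sketch below applies the sensible-heat relation (airflow = heat load ÷ (air density × specific heat × temperature rise)). The air properties are standard approximate values, and the 12 K intake-to-exhaust temperature rise is an assumed figure chosen for illustration, not a number from this guide.

```python
AIR_DENSITY_KG_M3 = 1.2            # approximate density of air near room temperature
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of air
M3S_TO_CFM = 2118.88               # cubic meters per second to cubic feet per minute


def required_airflow_m3s(rack_kw: float, delta_t_k: float) -> float:
    """Airflow needed to carry away rack_kw of heat at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    heat_w = rack_kw * 1000.0
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


# Compare a 12 kW rack with a 40 kW AI rack, assuming a 12 K temperature rise.
for rack_kw in (12.0, 40.0):
    flow = required_airflow_m3s(rack_kw, delta_t_k=12.0)
    print(f"{rack_kw:.0f} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:.0f} CFM)")
```

Required airflow scales linearly with heat load, so a 40 kW rack needs more than three times the air of a 12 kW rack at the same temperature rise. That is the practical pressure behind direct-to-chip and immersion designs.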

8. Cost, ROI, and Case Studies

Every decision in data center design balances CAPEX vs OPEX. Cutting corners often means higher lifetime costs.

- Hyperscale AI deployment: immersion cooling CAPEX was high but saved $3M annually in OPEX.

- Colocation in Bangkok: hot climate forced hybrid cooling; payback period = 4 years.

- European colocation: shifted to renewable-powered UPS with modular lithium batteries, cutting reported carbon emissions by 60%.
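The CAPEX vs OPEX trade-off in these examples can be framed as a simple payback calculation. The sketch below is a rough illustration only: the $3M annual saving echoes the hyperscale example above, but the $9M CAPEX premium is a hypothetical figure, and simple payback ignores discounting, maintenance, and energy price changes.

```python
def simple_payback_years(extra_capex: float, annual_opex_savings: float) -> float:
    """Years needed for annual OPEX savings to recover the additional CAPEX."""
    if annual_opex_savings <= 0:
        raise ValueError("annual savings must be positive to reach payback")
    return extra_capex / annual_opex_savings


# Hypothetical CAPEX premium; the annual saving matches the hyperscale example.
capex_premium_usd = 9_000_000
annual_savings_usd = 3_000_000
years = simple_payback_years(capex_premium_usd, annual_savings_usd)
print(f"Simple payback: {years:.1f} years")
# prints: Simple payback: 3.0 years
```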

Frequently Asked Questions (FAQ)

Q1: What percentage of data center energy is used for cooling?

A: Typically 30–40%, though efficient designs can reduce it to ~25%.

Q2: What’s the best cooling method for high-density AI racks?

A: Direct-to-chip or immersion liquid cooling, as air systems cannot handle 40+ kW racks efficiently.

Q3: How often should UPS batteries be replaced?

A: Standard VRLA batteries last 3–5 years; lithium-ion can exceed 10 years.

Q4: What’s the difference between N+1 and 2N redundancy?

A: N+1 adds one spare component beyond the N needed to carry the load. 2N fully duplicates the system, so no single failure can take it down.
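The difference shows up clearly in unit counts. Here is a minimal sketch, assuming a hypothetical 900 kW critical load served by 250 kW UPS modules; the function names and figures are illustrative and not taken from any vendor sizing tool.

```python
import math


def units_required(load_kw: float, unit_capacity_kw: float) -> int:
    """N: the minimum number of units needed to carry the load."""
    return math.ceil(load_kw / unit_capacity_kw)


def installed_units(n: int, scheme: str) -> int:
    """Total units installed under a given redundancy scheme."""
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1      # one spare beyond the minimum
    if scheme == "2N":
        return 2 * n      # a complete duplicate system
    raise ValueError(f"unknown redundancy scheme: {scheme}")


# Hypothetical load and module size for illustration only.
n = units_required(load_kw=900.0, unit_capacity_kw=250.0)
for scheme in ("N", "N+1", "2N"):
    print(f"{scheme}: {installed_units(n, scheme)} units")
# prints: N: 4 units, then N+1: 5 units, then 2N: 8 units (one per line)
```

N+1 tolerates the loss of any single unit, while 2N tolerates the loss of an entire power or cooling path, at roughly double the equipment cost.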

Q5: How does lighting affect data center cooling?

A: Low-heat, efficient fixtures like SeamLine Batten reduce thermal load, meaning less cooling energy is needed.