Data Center Space, Power & Cooling: Engineering Principles, Metrics, and Optimization Strategies

- Why Space, Power & Cooling Define Data Center Viability

- Space Planning: White Space, Layout & Lighting

- Power Infrastructure & Density Planning

- Cooling Technologies: From Air to Immersion

- Metrics: PUE, WUE & Beyond

- Thermal Management & Airflow Control

- Sustainability & Efficiency in Practice

- Future Trends: AI Loads, Liquid Cooling & Modular Growth

- Frequently Asked Questions (FAQ)

Key Takeaways

| Question | Short Answer |

|---|---|

| What are the three pillars of data center capacity? | Space, power, and cooling — each interdependent. |

| Why is cooling now critical? | High-density racks, AI workloads, and energy costs make thermal management a top priority. |

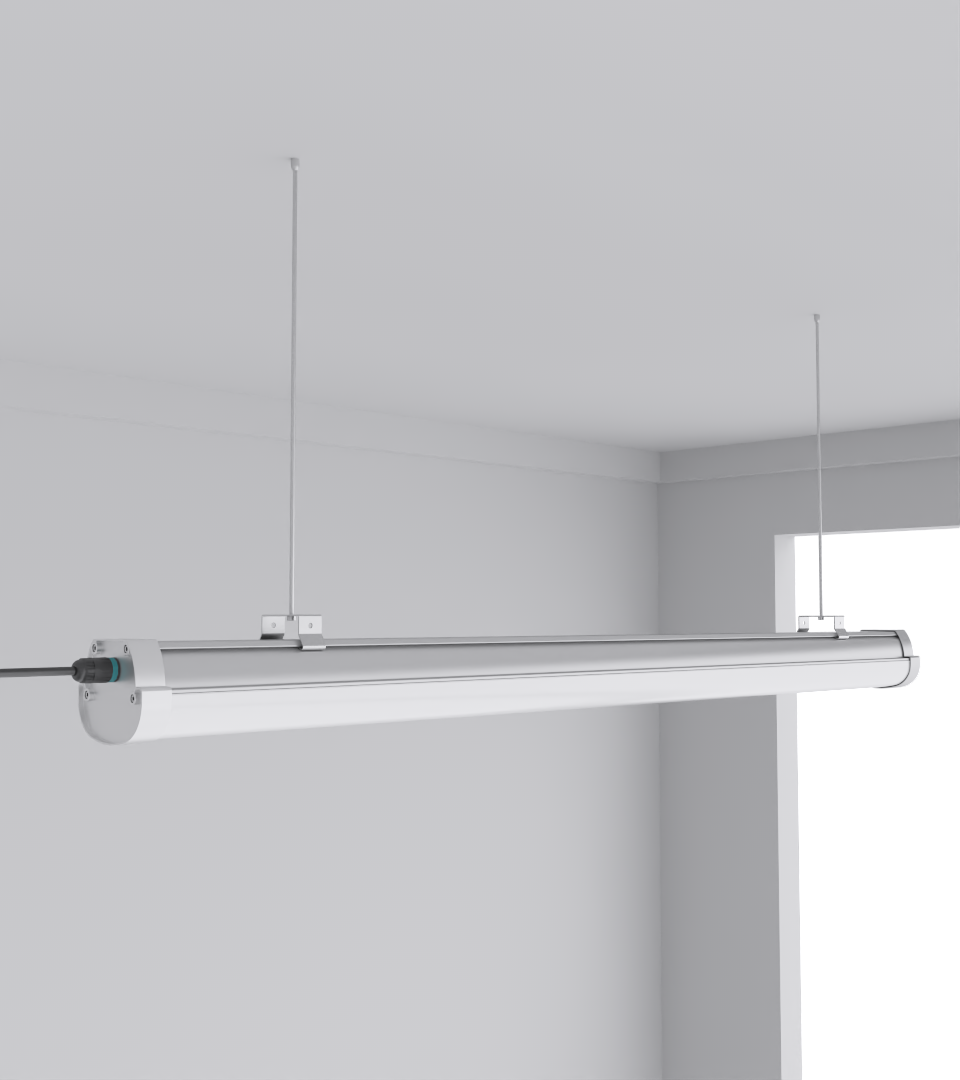

| How does lighting affect cooling? | Inefficient fixtures add unwanted heat load; CAE’s Squarebeam Elite and Quattro Triproof Batten reduce heat emissions. |

| What are standard metrics? | PUE (Power Usage Effectiveness), WUE (Water Usage Effectiveness), rack density (kW/rack). |

| What’s the future trend? | Liquid cooling, immersion systems, AI-driven controls, modular designs. |

| How do contractors plan upgrades? | Start with capacity audits, then evaluate power and cooling infrastructure, then integrate efficient luminaires. |

1. Why Space, Power & Cooling Define Data Center Viability

Data centers rarely fail in one dramatic collapse. They slip when space is maxed out, when a UPS is overloaded, or when heat backs up into racks. I’ve seen facilities where lighting alone pushed local air temperatures up by 2–3°C, forcing cooling systems to overcompensate. That’s why contractors today pair efficient layouts with lighting like the SeamLine Batten to avoid compounding cooling demand.

2. Space Planning: White Space, Layout & Lighting

A rack isn’t just a rectangle; it’s a heat emitter. Planning space means planning airflow corridors, containment, and lighting placement. Poorly placed luminaires can block airflow or add to hotspots. In one retrofit, swapping out old fluorescents for Quattro Triproof Batten fixtures reduced maintenance visits by 30% in a humid facility corridor.

3. Power Infrastructure & Density Planning

Power is never static. A rack that drew 5kW ten years ago may now pull 30kW with AI accelerators. Contractors size not just for today but for growth curves. In Malaysia, we had to redesign UPS distribution after a facility’s density doubled in under 18 months.
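
To make the growth-curve idea concrete, here is a minimal sketch that projects per-rack draw under a fixed doubling time. The 18-month doubling comes from the Malaysia example above (the real figure was "under 18 months", so treat it as an upper bound); the 15 kW starting point, the 30 kW feed limit, and the function names are assumptions for illustration, not values from any specific facility.

```python
import math


def project_density(current_kw: float, doubling_months: float, horizon_months: float) -> float:
    """Project per-rack power assuming exponential growth with a fixed doubling time."""
    return current_kw * 2 ** (horizon_months / doubling_months)


def months_until_limit(current_kw: float, limit_kw: float, doubling_months: float) -> float:
    """Months until the projected draw reaches limit_kw (0 if it is already there)."""
    if current_kw >= limit_kw:
        return 0.0
    return doubling_months * math.log2(limit_kw / current_kw)


# Illustrative inputs only: 15 kW racks today, an 18-month doubling time,
# and a hypothetical 30 kW per-rack feed limit.
projected_kw = project_density(15.0, 18.0, 36.0)      # draw after 36 months
runway_months = months_until_limit(15.0, 30.0, 18.0)  # time left before the limit

print(f"Projected draw in 36 months: {projected_kw:.1f} kW per rack")
print(f"Runway before the 30 kW limit: {runway_months:.1f} months")
```

Real growth is rarely this smooth, so a projection like this is best used to decide how much UPS and distribution headroom to reserve, not to predict a date.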

Lighting indirectly affects this too: CAE’s Budget High Bay Light is engineered at 160 lm/W, which keeps lighting's electrical overhead low so that more of the power budget stays on IT load.
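
The efficacy figure translates into electrical load with simple arithmetic: watts equal lumens divided by lm/W. The sketch below assumes a hypothetical 200,000 lm requirement for a hall and a hypothetical 100 lm/W legacy fixture for comparison; only the 160 lm/W value comes from the text. It also assumes that essentially all lighting power ends up as heat in the room, which is a common simplification.

```python
def lighting_power_w(target_lumens: float, efficacy_lm_per_w: float) -> float:
    """Electrical power needed to deliver target_lumens at a given efficacy."""
    if efficacy_lm_per_w <= 0:
        raise ValueError("efficacy must be positive")
    return target_lumens / efficacy_lm_per_w


# Hypothetical hall requirement; the legacy efficacy is an assumed comparison point.
TARGET_LUMENS = 200_000
efficient_w = lighting_power_w(TARGET_LUMENS, 160)  # efficacy quoted in the text
legacy_w = lighting_power_w(TARGET_LUMENS, 100)     # assumed older fixture
saved_w = legacy_w - efficient_w

print(f"Efficient fixtures: {efficient_w:.0f} W, legacy: {legacy_w:.0f} W")
print(f"Power (and roughly heat) avoided: {saved_w:.0f} W")
```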

4. Cooling Technologies: From Air to Immersion

Cooling is no longer just CRAC units humming in the corner. Choices now split into air, liquid, and immersion cooling. Each comes with cost, efficiency, and risk tradeoffs. In Johor, we trialed immersion on 50kW racks; PUE dropped by 0.2, but water-side management became the bottleneck. Lighting factored in too: Squarebeam Elite emitted less radiant heat than legacy fixtures, improving local CFD models.
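
To put a PUE drop of 0.2 in perspective: total facility power is IT power multiplied by PUE, so at a constant IT load the overhead saved is simply the IT load times the drop. The 1,000 kW IT load below is a hypothetical figure for illustration, not the Johor site's actual load.

```python
HOURS_PER_YEAR = 8760


def overhead_saving_kw(it_load_kw: float, pue_drop: float) -> float:
    """Facility power saved at constant IT load when PUE falls by pue_drop."""
    return it_load_kw * pue_drop


# Hypothetical 1,000 kW IT load; the 0.2 drop is the figure quoted in the text.
saving_kw = overhead_saving_kw(1000.0, 0.2)
saving_kwh_per_year = saving_kw * HOURS_PER_YEAR

print(f"Overhead saved: {saving_kw:.0f} kW")
print(f"Energy saved per year: {saving_kwh_per_year:,.0f} kWh")
```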

5. Metrics: PUE, WUE & Beyond

Numbers drive credibility. PUE below 1.3 is considered high performance; anything above 1.6 usually signals inefficiencies. Cooling directly dictates these numbers, but so do lighting loads.

| Metric | What It Measures | Target Value |
|---|---|---|
| PUE | Power Usage Effectiveness | 1.2–1.4 |
| WUE | Water Usage Effectiveness | < 1.0 L/kWh |
| CUE | Carbon Usage Effectiveness | Facility-dependent |
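
All three metrics share the same denominator, IT equipment energy: PUE divides total facility energy by it, WUE divides site water use by it, and CUE divides carbon emissions by it. The sketch below computes them from annual totals. The sample figures are made up, the class and function names are illustrative, and the "mid-range" label for values between the 1.3 and 1.6 thresholds is my own shorthand.

```python
from dataclasses import dataclass


@dataclass
class AnnualTotals:
    facility_kwh: float   # total energy entering the facility
    it_kwh: float         # energy delivered to IT equipment
    water_litres: float   # site water consumption
    co2_kg: float         # emissions attributed to facility energy


def pue(t: AnnualTotals) -> float:
    return t.facility_kwh / t.it_kwh


def wue(t: AnnualTotals) -> float:
    return t.water_litres / t.it_kwh  # L/kWh


def cue(t: AnnualTotals) -> float:
    return t.co2_kg / t.it_kwh  # kg CO2 per kWh


def rate_pue(value: float) -> str:
    """Apply the rule-of-thumb thresholds from the text."""
    if value < 1.3:
        return "high performance"
    if value > 1.6:
        return "likely inefficient"
    return "mid-range"


# Made-up annual totals for illustration.
site = AnnualTotals(facility_kwh=12_500_000, it_kwh=10_000_000,
                    water_litres=8_000_000, co2_kg=5_000_000)

print(f"PUE {pue(site):.2f} ({rate_pue(pue(site))})")
print(f"WUE {wue(site):.2f} L/kWh, CUE {cue(site):.2f} kg CO2/kWh")
```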

6. Thermal Management & Airflow Control

Air moves strangely in confined IT spaces. Without CFD mapping, it’s easy to miss hot spots behind racks. Fixtures like the Simplitz Batten V3 are slimline enough not to obstruct ducts or cable trays.
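
Before any CFD run, a first-pass airflow estimate comes from the sensible heat equation: airflow = heat load / (air density × specific heat × temperature rise). The sketch below uses approximate air properties near 20°C at sea level and a hypothetical 10 kW rack with a 12 K rise from inlet to exhaust. It is a sizing check under those assumptions, not a substitute for CFD.

```python
AIR_DENSITY_KG_M3 = 1.2   # approximate, about 20 °C at sea level
AIR_CP_J_KG_K = 1005.0    # approximate specific heat of dry air
CFM_PER_M3S = 2118.88     # cubic feet per minute in one cubic metre per second


def required_airflow_m3s(heat_w: float, delta_t_k: float) -> float:
    """Airflow needed to carry heat_w away with a delta_t_k air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_k)


# Hypothetical rack: 10 kW load, 12 K rise across the servers.
flow_m3s = required_airflow_m3s(10_000, 12)
flow_cfm = flow_m3s * CFM_PER_M3S

print(f"Required airflow: {flow_m3s:.2f} m^3/s (about {flow_cfm:.0f} CFM)")
```

Because airflow scales linearly with heat load, a 50kW rack at the same temperature rise needs five times the air, which is one reason high-density racks move toward liquid cooling.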

7. Sustainability & Efficiency in Practice

Cooling guzzles both energy and water. Contractors now balance evaporative systems, closed-loop chillers, and heat reuse. In one Singapore retrofit, switching to efficient luminaires like the Squarebeam Elite freed enough cooling margin to allow partial free cooling.

8. Future Trends: AI Loads, Liquid Cooling & Modular Growth

AI racks at 50–100kW each will push cooling innovation. Expect immersion adoption, but also modular cooling pods and on-rack heat exchangers. Space will shrink, densities rise, and lighting fixtures must remain compact and low-heat. CAE’s SeamLine Batten already fits this trajectory.

FAQs

- How much of a data center’s power is used for cooling? Typically 30–40%, though high-efficiency sites drop this to ~25%.

- Does lighting really impact cooling loads? Yes — inefficient luminaires add heat, raising CRAC/CRAH demand.

- What’s the safe maximum rack density? Standard today is 10–20kW, but AI pushes up to 100kW/rack.

- Is immersion cooling the future? For HPC/AI, yes. For enterprise/colocation, hybrid air-liquid remains practical.

- How do contractors start a retrofit? Audit capacity (space/power/cooling), then replace heat-heavy infrastructure like old lights, then optimize cooling.