Data Center Cooling Infrastructure Explained: Air, Liquid, and Immersion Systems for High-Density Racks

- Baseline physics, then choices: air, liquid, or both

- Containment is the cheap hero (and the first audit)

- Liquid cooling in the real world: CDUs, quick disconnects, and nerves

- Controls that earn their keep: sensors, alarms, and mild obsession

- Air efficiency checklist: the boring bits that save big

- Liquid details that stop headaches: materials, maintenance, and micro-habits

- Emergency scenarios: power wobbles, smoke events, and human panic

- Procurement and retrofits: specify outcomes, pilot ruthlessly

- Frequently Asked Questions (FAQ)

Key Takeaways

| Topic | Quick Summary |
|---|---|
| Cooling approach | Air works to ~10–15 kW/rack (up to ~30 kW with solid containment and ΔT); liquid or immersion for AI density. |
| Containment | Seal leaks, manage tiles, aim for >90% separation of hot and cold streams. |
| Liquid details | CDUs, quick disconnects, leak detection, safe coolants. |
| Controls | Sensors at rack inlets, tie into BMS/DCIM, automate setpoints. |

1) Baseline physics, then choices: air, liquid, or both

Air is lazy; it goes where pressure is easy, not where your servers beg for it. Typical air setups carry you comfortably to ~10–15 kW/rack, sometimes 30 kW/rack if containment and ΔT are solid. Past that, direct-to-chip liquid cooling or immersion starts to make sense.
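
A rough way to see why air runs out of road is the sensible-heat balance P = ρ · cp · V · ΔT, which gives the volume flow a rack needs for a given load and temperature rise. The sketch below is illustrative only: the fluid properties, the 12 K air rise, and the 10 K water rise are assumed round numbers, and `required_flow_m3s` is a hypothetical helper rather than part of any tool.

```python
# Sensible-heat balance: P = rho * cp * V * dT  ->  V = P / (rho * cp * dT)
# All property values below are assumed round numbers for illustration.
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K), air near 20 °C
WATER = {"density": 998.0, "cp": 4186.0}  # kg/m^3, J/(kg*K), water near 20 °C
M3S_TO_CFM = 2118.88                      # 1 m^3/s expressed in cubic feet per minute


def required_flow_m3s(heat_kw: float, fluid: dict, delta_t_k: float) -> float:
    """Volume flow (m^3/s) needed to carry heat_kw at a fluid temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_kw * 1000.0 / (fluid["density"] * fluid["cp"] * delta_t_k)


for kw in (10, 15, 30):
    air = required_flow_m3s(kw, AIR, delta_t_k=12.0)
    water = required_flow_m3s(kw, WATER, delta_t_k=10.0)
    print(
        f"{kw} kW/rack: air {air:.2f} m^3/s ({air * M3S_TO_CFM:.0f} CFM), "
        f"water {water * 60000:.1f} L/min"
    )
```

The point of the comparison is the ratio, not the exact figures: for the same heat load, water needs a volume flow that is thousands of times smaller than air, which is why dense racks push toward liquid.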

2) Containment is the cheap hero (and the first audit)

Hot-aisle or cold-aisle, pick one and finish it. Half-done containment achieves very little, because air takes every gap you leave open. Aim for >90% hot/cold separation; otherwise your fans burn power moving air that never reaches a server inlet.

3) Liquid cooling in the real world: CDUs, quick disconnects, and nerves

Liquid systems need respect. CDUs, leak detection, and quick disconnects are your best friends. Plan service areas carefully, and always train staff on de-airing loops.

4) Controls that earn their keep: sensors, alarms, and mild obsession

Telemetry is king. Rack-inlet sensors, ΔP monitors, and water temps need to feed into BMS/DCIM. Raise supply temps gradually and track results.
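
The "raise gradually and track results" loop can be sketched as a small decision function. This is a minimal sketch under stated assumptions, not a BMS or DCIM API: `RampConfig`, `next_supply_setpoint`, the 0.5 °C step, the 27 °C inlet ceiling, and the 1.5 °C headroom are all hypothetical values chosen for illustration, and a real site would take its limits from the equipment specifications.

```python
from dataclasses import dataclass


@dataclass
class RampConfig:
    step_c: float = 0.5          # assumed size of each setpoint change
    inlet_limit_c: float = 27.0  # assumed rack-inlet ceiling
    margin_c: float = 1.5        # assumed headroom to keep below the ceiling
    max_supply_c: float = 24.0   # assumed upper bound for the supply setpoint


def next_supply_setpoint(current_c: float, inlet_temps_c: list[float], cfg: RampConfig) -> float:
    """Step up when the hottest inlet has headroom, step back when it is over the limit, else hold."""
    if not inlet_temps_c:
        return current_c  # no telemetry: change nothing
    hottest = max(inlet_temps_c)
    if hottest > cfg.inlet_limit_c:
        return current_c - cfg.step_c  # over the ceiling: back off one step
    if hottest <= cfg.inlet_limit_c - cfg.margin_c:
        return min(current_c + cfg.step_c, cfg.max_supply_c)  # headroom: raise one step
    return current_c  # inside the margin band: hold


cfg = RampConfig()
print(next_supply_setpoint(22.0, [23.0, 24.5], cfg))  # headroom available
print(next_supply_setpoint(22.0, [26.0], cfg))        # inside the margin band
print(next_supply_setpoint(22.0, [27.5], cfg))        # over the ceiling
```

Keying the decision to the hottest rack inlet rather than the average is deliberate here: one starved rack should stop the ramp even when the room looks fine overall.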

5) Air efficiency checklist: the boring bits that save big

Containment audit, tile map, bypass hunt, fan logic, and setpoint raises. Small fixes pay off quickly.
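
For the bypass hunt, a simple mixing balance gives a first estimate of how much supply air returns to the cooling units without passing through IT gear. If a fraction b of the supply air bypasses the racks, the mixed return temperature is T_supply + (1 - b) · ΔT_IT. The sketch below solves that for b; it assumes no hot-air recirculation into server inlets, and `bypass_fraction` and the example temperatures are hypothetical.

```python
def bypass_fraction(supply_c: float, return_c: float, it_delta_t_c: float) -> float:
    """Estimate the share of supply air that bypasses IT equipment.

    Mixing balance (assumes no recirculation): return = supply + (1 - b) * it_delta_t
    The result is clamped to the range 0..1.
    """
    if it_delta_t_c <= 0:
        raise ValueError("it_delta_t_c must be positive")
    ratio = (return_c - supply_c) / it_delta_t_c
    return min(max(1.0 - ratio, 0.0), 1.0)


# Example with assumed readings: 20 °C supply, 29 °C return, 12 K rise across the servers.
share = bypass_fraction(supply_c=20.0, return_c=29.0, it_delta_t_c=12.0)
print(f"Estimated bypass: {share:.0%}")
```

A return temperature higher than supply plus the IT rise points to recirculation instead; the clamp reports that case as zero bypass rather than a negative number.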

6) Liquid details that stop headaches: materials, maintenance, and micro-habits

Pick materials that don’t corrode. Swap filters early. Train staff to do wet work in pairs. Label every loop clearly.

7) Emergency scenarios: power wobbles, smoke events, and human panic

Cooling inertia buys minutes, not miracles. Staff need clear playbooks. Lighting must support egress during smoke or outages.
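
How many minutes depends on the thermal mass available. A back-of-envelope estimate is t = m · cp · ΔT / P, the time for a mass m to warm by an allowable ΔT under a heat load P. The sketch below is a deliberately crude lower bound: it ignores the thermal mass of servers and the building, and the room volume, tank size, load, and allowable rises are assumed numbers. `ride_through_seconds` is a hypothetical helper.

```python
def ride_through_seconds(mass_kg: float, cp_j_per_kg_k: float,
                         allowable_rise_k: float, heat_load_kw: float) -> float:
    """Seconds until mass_kg warms by allowable_rise_k while absorbing heat_load_kw."""
    if heat_load_kw <= 0:
        raise ValueError("heat_load_kw must be positive")
    return mass_kg * cp_j_per_kg_k * allowable_rise_k / (heat_load_kw * 1000.0)


HEAT_LOAD_KW = 200.0  # assumed IT load for the room

# Room air only: assumed 500 m^3 of air at 1.2 kg/m^3, allowed to warm by 10 K.
air_s = ride_through_seconds(500.0 * 1.2, 1005.0, 10.0, HEAT_LOAD_KW)

# Chilled-water buffer: assumed 10 m^3 tank at 998 kg/m^3, allowed to warm by 6 K.
# This only helps if pumps and fans keep running on backup power.
tank_s = ride_through_seconds(10.0 * 998.0, 4186.0, 6.0, HEAT_LOAD_KW)

print(f"Room air alone: {air_s:.0f} s")
print(f"Buffer tank:    {tank_s / 60:.1f} min")
```

With these assumed numbers, room air alone lasts about 30 seconds while the buffer tank stretches that to roughly 20 minutes, which is why playbooks should state which source of inertia the site is actually counting on.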

8) Procurement and retrofits: specify outcomes, pilot ruthlessly

Write RFPs as checklists of measurable outcomes. Pilot upgrades in one pod before scaling. Fold lighting replacements into cooling maintenance windows.

FAQs

Q1: How do I know if air is “enough”?

If rack-inlet temperatures stay within spec at a 24 °C supply temperature and the fans are not running flat out, air is enough.

Q2: What’s the quickest retrofit that actually moves the needle?

Containment + sealing + tile shuffle.

Q3: Is immersion cooling overkill for most sites?

Often yes; direct-to-chip is usually enough unless density is extreme.

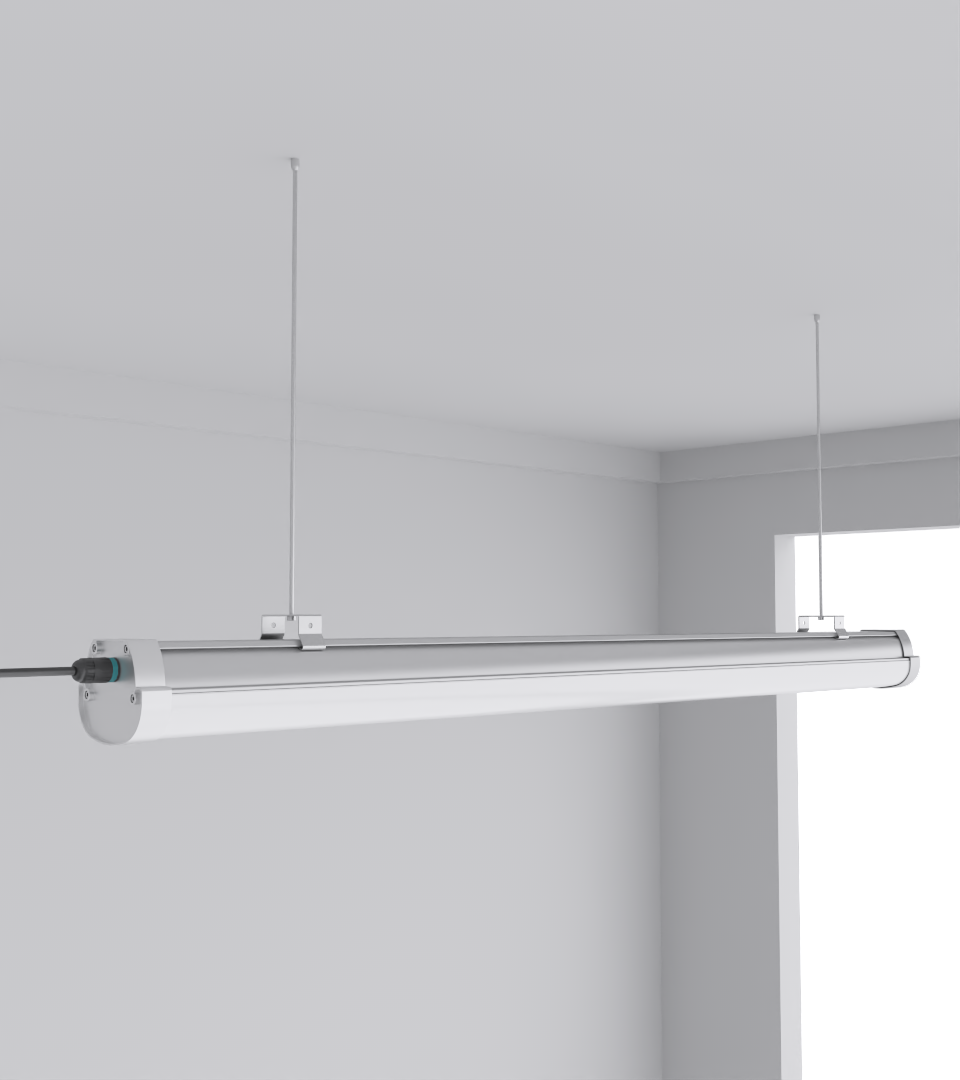

Q4: Do lighting changes really affect cooling?

Indirectly, yes: glare and shadows cause mistakes during hands-on work. Use SeamLine Batten or Quattro Triproof Batten.

Q5: What should I demand in the SLA from cooling vendors?

Outcome metrics, recovery time, telemetry integration.