Data Center Network Architecture Best Practices: Proven Design Models, Scalability Strategies, and Zero-Trust Security Integration


- Modern Data Center Network Design Principles

- Choosing the Right Architecture Model

- Bandwidth & Latency Planning for AI and HPC

- Network Segmentation and Zero Trust

- High Availability & Redundancy Design

- Automation & Monitoring

- Energy Efficiency & Cooling Considerations

- Future-Proofing Your Network Architecture

- Frequently Asked Questions

Key Takeaways

| Question | Quick Answer |
|---|---|
| Most common design | Spine-leaf architecture for predictable low latency and scalable east-west traffic. |
| Minimizing downtime | Redundant uplinks, MLAG, dual-homed spines, and failover testing. |
| Multi-tenant protocols | EVPN with VXLAN overlays using a BGP control plane. |
| AI/HPC workloads | Plan for 400G+ uplinks, RoCEv2 support, and non-blocking fabrics. |
| Lighting in network planning | Use fixtures like Squarebeam Elite to avoid heat load and maintain visibility. |
| Energy efficiency | Low-power optics, modular gear, intelligent port power-down. |
| Security principle | Zero-trust segmentation with micro-segmentation at multiple layers. |

Modern Data Center Network Design Principles

A high-performing data center network has three non-negotiables: low latency, fault tolerance, and scalability. This means designing with predictable traffic paths, redundancy at multiple layers, and enough port capacity for future expansion.

- Low latency: Keep hops minimal and use high-performance optics.

- Fault tolerance: Dual power, dual uplinks, and geographically diverse backups.

- Scalability: Modular spine switches and leaf switches ready for bandwidth upgrades.

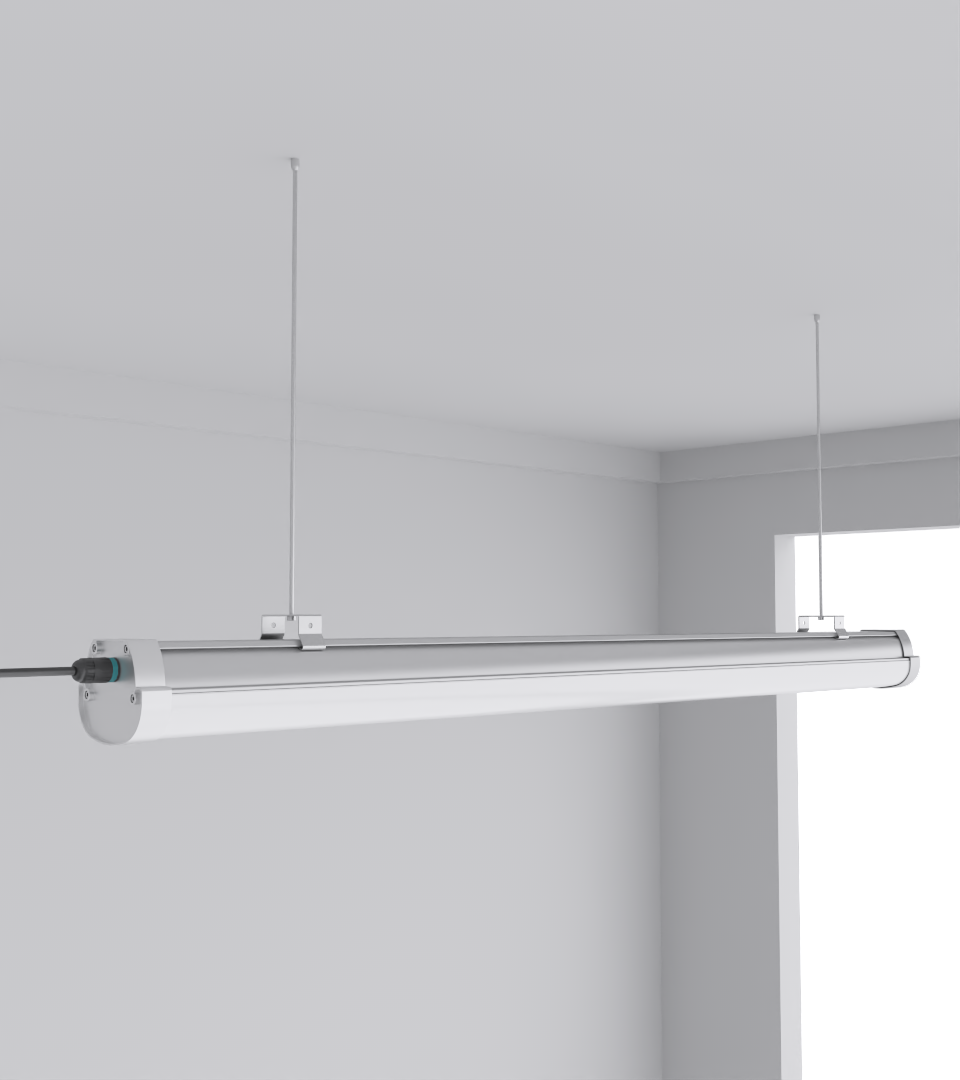

In physical build-outs, lighting like the Squarebeam Elite is often chosen for network rooms due to low heat emission and high lumen output, reducing cooling loads without sacrificing visibility.

Choosing the Right Architecture Model

Three primary approaches dominate modern deployments:

| Architecture | Pros | Cons |
|---|---|---|
| Spine-Leaf | Scalable, predictable latency | More cabling, higher initial cost |
| EVPN-VXLAN | Multi-tenant support, stretch L2 domains | Requires advanced routing skills |
| Hybrid | Balances legacy + modern | Complexity in O&M |

From my own deployments, hybrid is only worth it if you have existing three-tier assets you can’t phase out immediately. Otherwise, a pure spine-leaf with VXLAN overlays offers cleaner growth.
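
To make the growth argument concrete, here is a rough sizing sketch for a two-tier spine-leaf fabric. It assumes every leaf has exactly one uplink to every spine, so the leaf-facing port count of a spine caps the number of leaves. The `FabricPlan` class and all port counts are illustrative assumptions, not figures from a specific deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricPlan:
    """Hypothetical two-tier fabric: one uplink from each leaf to each spine."""
    spine_count: int      # number of spine switches
    spine_ports: int      # leaf-facing ports per spine (assumed value)
    leaf_downlinks: int   # server-facing ports per leaf (assumed value)

    @property
    def max_leaves(self) -> int:
        # Each leaf consumes one port on every spine, so the per-spine
        # port count is the ceiling on how many leaves can attach.
        return self.spine_ports

    @property
    def uplinks_per_leaf(self) -> int:
        # One uplink per spine; adding spines adds bandwidth, not leaves.
        return self.spine_count

    @property
    def max_server_ports(self) -> int:
        return self.max_leaves * self.leaf_downlinks


plan = FabricPlan(spine_count=4, spine_ports=64, leaf_downlinks=48)
print(f"leaves: {plan.max_leaves}, server ports: {plan.max_server_ports}")
```

Under these assumptions, adding spines raises per-leaf uplink capacity, while growing past the leaf ceiling requires higher-radix spines or an additional tier.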

Bandwidth & Latency Planning for AI and HPC

High-density compute clusters demand non-blocking, or close to non-blocking, fabrics. In practice this means:

- Plan for 400G/800G uplinks

- Use RoCEv2 for GPU clusters

- Keep ToR oversubscription under 1.5:1

Poor planning here leads to cascading failures when workloads spike. In one AI lab build, we had to re-pull half the fiber plant because early calculations ignored east-west training data volumes.
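
The oversubscription target above is easy to check early in a design. The sketch below computes the ratio of server-facing to spine-facing capacity on a single ToR switch. The function name and the port counts are hypothetical; substitute the values from your own bill of materials.

```python
def oversubscription_ratio(downlinks: int, downlink_gbps: float,
                           uplinks: int, uplink_gbps: float) -> float:
    """Return server-facing capacity divided by spine-facing capacity."""
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("a leaf needs at least one uplink with positive speed")
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


TARGET = 1.5  # the "under 1.5:1" guideline from the list above

# Hypothetical ToR with 32 x 100G server ports and 8 x 400G uplinks.
balanced = oversubscription_ratio(32, 100, 8, 400)
# The same switch with only 4 of the uplinks populated.
constrained = oversubscription_ratio(32, 100, 4, 400)

for name, ratio in [("balanced", balanced), ("constrained", constrained)]:
    verdict = "within" if ratio < TARGET else "exceeds"
    print(f"{name}: {ratio:.2f}:1 {verdict} the {TARGET}:1 target")
```

A ratio of 1.0 corresponds to a non-blocking leaf. This check covers port capacity only and says nothing about traffic patterns, so it complements rather than replaces an east-west traffic estimate.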

Network Segmentation and Zero Trust

Effective segmentation isn’t just VLANs anymore — it’s micro-segmentation using firewalls, ACLs, and hypervisor policies.

- Map all application flows.

- Place segmentation boundaries as close to workloads as possible.

- Enforce MFA and logging on all management planes.

Even in lighting zones, segment PoE lighting control networks from core compute. This prevents lateral movement if an IoT device is compromised.
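
The default-deny idea behind micro-segmentation can be sketched as an explicit flow allowlist. The example below is a simplified model, not a firewall implementation: the subnets, ports, and the `AllowRule` and `is_allowed` names are all hypothetical, and a real policy would also account for protocol, direction, and identity.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class AllowRule:
    src: str    # source CIDR
    dst: str    # destination CIDR
    port: int   # destination TCP port


# Hypothetical segments: app tier, database tier, PoE lighting controllers.
RULES = [
    AllowRule("10.10.1.0/24", "10.10.2.0/24", 5432),  # app -> database
    AllowRule("10.99.0.0/24", "10.99.0.0/24", 443),   # lighting stays in its own segment
]


def is_allowed(src: str, dst: str, port: int) -> bool:
    """Default deny: a flow passes only if an explicit rule matches it."""
    s, d = ip_address(src), ip_address(dst)
    return any(
        s in ip_network(r.src) and d in ip_network(r.dst) and port == r.port
        for r in RULES
    )


print(is_allowed("10.10.1.15", "10.10.2.20", 5432))  # app to database: True
print(is_allowed("10.99.0.7", "10.10.2.20", 5432))   # lighting to database: False
```

Because nothing is permitted unless it appears in the mapped application flows, a compromised lighting controller in this model has no path to the database tier.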

High Availability & Redundancy Design

A network without redundancy is a single point of failure waiting to happen.

- Dual-homed spines

- Redundant power feeds

- Multi-chassis link aggregation (MLAG)
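
A quick way to see what redundancy buys is the standard parallel-availability estimate. The sketch below assumes component failures are independent, which is optimistic when both paths share a power feed, a software bug, or a cable tray. The 99.9% figure is an assumed example, not a measured value.

```python
HOURS_PER_YEAR = 8760


def parallel_availability(component_availability: float, copies: int) -> float:
    """Availability of N redundant components, assuming independent failures."""
    if not 0.0 <= component_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if copies < 1:
        raise ValueError("need at least one component")
    # The group is down only when every copy is down at the same time.
    return 1.0 - (1.0 - component_availability) ** copies


def annual_downtime_hours(availability: float) -> float:
    return (1.0 - availability) * HOURS_PER_YEAR


for copies in (1, 2):
    a = parallel_availability(0.999, copies)
    print(f"{copies} path(s): {a:.6f} availability, "
          f"{annual_downtime_hours(a):.4f} h/year expected downtime")
```

With these assumed numbers, a single path is down for roughly 8.8 hours a year, while two independent paths bring the estimate down to about half a minute. Regular failover testing is what shows whether the independence assumption holds in practice.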

CAE Lighting applies the same logic in lighting — dual circuits for emergency and operational lighting in network rooms ensure visibility during failovers.

Automation & Monitoring

Automation isn’t optional anymore. Use:

- Ansible for templated configs

- Terraform for infrastructure as code

- Streaming telemetry for real-time monitoring
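
The core idea behind templated configs is to keep one reviewed template and generate per-device output from data. Ansible does this with Jinja2 templates and YAML inventories. The sketch below uses only Python's standard-library `string.Template` as a stand-in to show the pattern. The interface naming, the MTU value, and the stanza syntax are vendor-neutral assumptions, not real switch configuration.

```python
from string import Template

# Hypothetical, vendor-neutral interface stanza; real syntax depends on the NOS.
UPLINK_TEMPLATE = Template(
    "interface $name\n"
    "  description uplink to $peer\n"
    "  mtu $mtu\n"
)


def render_uplinks(leaf: str, spines: list[str], mtu: int = 9214) -> str:
    """Render one uplink stanza per spine for a single leaf switch."""
    stanzas = [
        UPLINK_TEMPLATE.substitute(name=f"Ethernet{i}", peer=spine, mtu=mtu)
        for i, spine in enumerate(spines, start=1)
    ]
    return f"! {leaf}\n" + "".join(stanzas)


config = render_uplinks("leaf-01", ["spine-01", "spine-02"])
print(config)
```

Generating every leaf from the same template keeps uplink settings consistent across the fabric and makes a change reviewable as a single diff.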

CAE Lighting’s data center lighting guide details how even lighting control can be tied into network monitoring platforms for unified O&M.

Energy Efficiency & Cooling Considerations

Networking gear generates heat — and lighting can make it worse if poorly chosen. Reduce impact by:

- Using low-heat LED fixtures (Squarebeam Elite, Quattro Triproof Batten)

- Designing cold/hot aisle containment

- Locating lighting to avoid obstructing airflow

Future-Proofing Your Network Architecture

Plan for:

- Readiness for link speeds beyond 800G

- Edge integration

- Quantum-safe encryption

- Liquid cooling in high-density zones

Lighting planning is part of it — if you need to swap rack layouts, modular fixtures like the SeamLine Batten allow rapid repositioning without rewiring.

Frequently Asked Questions

Q: How often should a data center network architecture be reviewed?

Every 18–24 months, or immediately after adopting a major new technology such as AI workloads.

Q: Can lighting affect network performance?

Indirectly, yes: excess fixture heat adds to the cooling load, and poorly placed fixtures can obstruct airflow. Both reduce cooling efficiency.

Q: Is EVPN-VXLAN overkill for small facilities?

If you’re under 200 racks, it may be. Stick to simpler VLAN + routed leaf designs unless you need multi-tenant isolation.

Q: Should PoE lighting be on the same network as IT systems?

No — always segment for security and reliability.