Inside Data Center Cloud Networking: Architecture, Security, and Performance Tuning

- What is a “data center cloud network,” really, and why should I care?

- Underlay first: spine–leaf, ECMP, and why Layer-3 everywhere saves your weekend

- Overlays & EVPN: the “cloudy” part that keeps apps roaming without tripping

- Performance & latency: east-west wins, north-south still complains loudly

- Security that actually ships: zero-trust, micro-segmentation, and sane blast-radius math

- AI clusters: fatter links, smaller cells, and cooling that doesn’t roll its eyes

- Facility-network handshake: how lighting, labels, and walking paths quietly improve MTTR

- Build plan you can actually ship: a small, clonable pod, then scale

- Frequently Asked Questions (FAQ)

Key Takeaways

| Feature or Topic | Summary |
|---|---|
| Hybrid Cloud Networks | Built on spine–leaf, overlays, and automation for scale and stability. |
| Latency Priorities | East–west dominates; design to handle microbursts and tail latency. |
| Security | Zero-trust segmentation beats perimeter-only thinking. |
| AI Workloads | Require higher throughput, smaller failure domains, better cooling. |
| Facilities Impact | Lighting, airflow, and human factors reduce downtime and errors. |

1) What is a “data center cloud network,” really, and why should I care?

Is it just “some switches plus cloud logos,” or is it an actual design pattern that behaves under pressure? Short answer: it’s a fabric, usually spine–leaf, stitched to overlays (VXLAN/EVPN) and interconnected with public cloud or colos. Long answer: it’s the bit that lets apps scale sideways without tripping on their own cables. Do we overcomplicate it? Yeah, sometimes; but the essentials are plain: deterministic paths, any-to-any reachability, and failure domains that fail small, not loud.

2) Underlay first: spine–leaf, ECMP, and why Layer-3 everywhere saves your weekend

Why do we keep shouting “underlay first”? Because overlays can’t save a flaky core; physics still wins, soz. Question: is Layer-3 to the top-of-rack (ToR) overkill? Nope; it shrinks failure domains and lets ECMP do its per-flow hashing magic, pinning each flow to one of the equal-cost paths so traffic spreads across spines without reordering packets. Keep links uniform, keep MTUs consistent, keep routing simple enough that 2 a.m. you doesn’t hate daytime you.
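To make the ECMP point concrete, here is a minimal sketch of hash-based path selection. It is illustrative only: real switches hash in hardware with vendor-specific functions and seeds, and the spine names, addresses, and the `pick_spine` helper are assumptions made up for this example.

```python
import hashlib

def pick_spine(flow, spines):
    """Pick an uplink by hashing the flow's 5-tuple (illustrative, not a vendor algorithm)."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:4], "big") % len(spines)
    return spines[index]

# Hypothetical fabric and flow: (src IP, dst IP, protocol, src port, dst port)
spines = ["spine1", "spine2", "spine3", "spine4"]
flow = ("10.0.1.10", "10.0.2.20", 6, 49152, 443)

# The same flow always lands on the same spine, so its packets stay in order.
first = pick_spine(flow, spines)
second = pick_spine(flow, spines)
print(first == second)

# Different flows (here, different source ports) spread across the spines.
used = {pick_spine(("10.0.1.10", "10.0.2.20", 6, port, 443), spines)
        for port in range(49152, 50152)}
print(sorted(used))
```

The trade-off worth remembering: hashing balances flows, not bytes, so a handful of elephant flows can still pile onto one uplink.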

3) Overlays & EVPN: the “cloudy” part that keeps apps roaming without tripping

Do we still need VLANs? Sure, but VXLAN gives you way more segments, and EVPN handles control-plane sanity so you don’t broadcast your soul across the fabric. Question: is asymmetric IRB gonna bite me? It can: asymmetric IRB needs every destination VLAN/VNI configured on every leaf that routes into it, which gets painful as the fabric grows. Plan distributed gateways and ECMP-friendly routing, keep the MAC/IP learning clean, and prefer symmetric IRB so traffic flows are predictable under churn.
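Two bits of arithmetic sit behind the VLAN vs VXLAN question: how many segments you get, and how much underlay MTU the encapsulation costs. The sketch below assumes an IPv4 underlay and an untagged inner frame; an IPv6 underlay or a preserved inner VLAN tag adds more bytes.

```python
# Segment space: 12-bit VLAN ID vs 24-bit VXLAN VNI
usable_vlans = 2**12 - 2   # VLAN IDs 0 and 4095 are reserved
vni_space = 2**24

# Bytes added on top of the tenant's IP packet (assumes IPv4 underlay, untagged inner frame)
INNER_ETHERNET = 14
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IPV4 = 20
OVERHEAD = INNER_ETHERNET + VXLAN_HEADER + OUTER_UDP + OUTER_IPV4

def min_underlay_mtu(tenant_ip_mtu):
    """Smallest underlay IP MTU that carries a tenant packet without fragmentation."""
    return tenant_ip_mtu + OVERHEAD

print(usable_vlans)            # 4094
print(vni_space)               # 16777216
print(OVERHEAD)                # 50
print(min_underlay_mtu(1500))  # 1550
```

In practice many fabrics simply run jumbo frames on the underlay so nobody has to do this sum during an incident.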

4) Performance & latency: east-west wins, north-south still complains loudly

Why do folks still optimise for north-south like it’s 2012? Habit, mostly. Modern workloads chat laterally, so your fabric should minimise oversubscription between leaves. Question: how do I know I’m not lying to myself? Measure p99 latency and microburst drops, and keep buffer profiles sane; if your monitoring shows pretty averages but users scream, your tail is wagging the outage.
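Here is a rough sketch of the two numbers that paragraph leans on: leaf oversubscription and the gap between mean and p99 latency. The port counts and latency samples are made up for illustration, and `p99` uses the simple nearest-rank method rather than anything your monitoring stack necessarily does.

```python
import statistics

def oversubscription(downlinks, downlink_gbps, uplinks, uplink_gbps):
    """Ratio of host-facing capacity to spine-facing capacity on one leaf."""
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)

def p99(samples):
    """99th percentile by nearest rank."""
    ordered = sorted(samples)
    rank = (99 * len(ordered) + 99) // 100  # ceil(0.99 * n) in integer math
    return ordered[rank - 1]

# Hypothetical leaf: 48 x 25G down, 6 x 100G up
ratio = oversubscription(48, 25, 6, 100)
print(ratio)  # 2.0, i.e. 2:1

# Made-up latency samples in microseconds: mostly fine, with an ugly tail
latencies_us = [20] * 98 + [900, 1200]
mean_us = statistics.mean(latencies_us)
print(mean_us)            # 40.6
print(p99(latencies_us))  # 900
```

The mean looks healthy at about 40 µs while the p99 is 900 µs, which is exactly the "pretty averages, screaming users" situation.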

5) Security that actually ships: zero-trust, micro-segmentation, and sane blast-radius math

Is a big crunchy perimeter still a plan? Nah, it’s vibes. Zero-trust means authn/authz at every hop, micro-segments tied to app identity, and east-west inspection that doesn’t collapse during peak. Question: do we need inline for everything? Not always; combine host-based controls, fabric ACLs, and service insertion for flows that justify it.
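As a toy illustration of micro-segments tied to app identity, the sketch below models a default-deny allow-list. The app names, ports, and the `is_allowed` helper are hypothetical; a real deployment would express this in host firewalls, fabric ACLs, or a policy controller rather than in Python.

```python
from typing import NamedTuple

class Rule(NamedTuple):
    src_app: str
    dst_app: str
    port: int

# Hypothetical policy: only these app-to-app flows are permitted.
ALLOWED = {
    Rule("web", "api", 8443),
    Rule("api", "db", 5432),
}

def is_allowed(src_app, dst_app, port):
    """Default deny: a flow passes only if it matches an explicit rule."""
    return Rule(src_app, dst_app, port) in ALLOWED

print(is_allowed("web", "api", 8443))  # True
print(is_allowed("web", "db", 5432))   # False: web may not skip the API tier
```

The blast-radius math falls out of the rule set: a compromised `web` host can reach exactly one port on `api`, not the whole east-west fabric.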

6) AI clusters: fatter links, smaller cells, and cooling that doesn’t roll its eyes

Why does AI make networks sweat? RDMA, collectives, and synchronized east-west bursts; you’ll want 400G→800G uplifts sooner than your 3-year plan admits. Should we flatten layers? Kinda: keep low-diameter paths and consider dragonfly or Clos variants for huge pods; but don’t romanticize exotic topologies if your ops can’t cable them without crying.

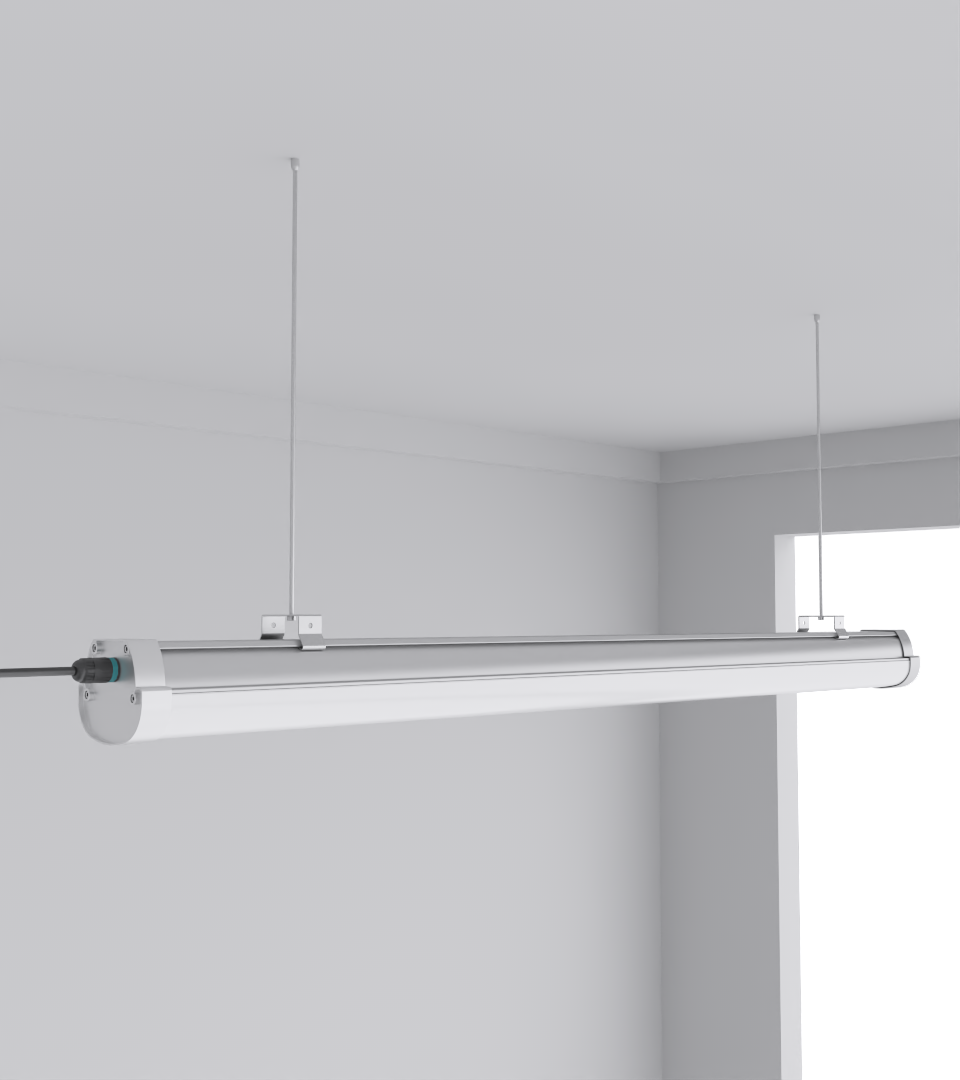

7) Facility-network handshake: how lighting, labels, and walking paths quietly improve MTTR

Does better lighting reduce MTTR? We measured it (rough, but fair): fewer mis-patches, quicker port identification, less hunt time during alarms. Skeptical? Same, till we saw it in audits. Aim for glare-controlled fixtures over reflective doors.

8) Build plan you can actually ship: a small, clonable pod, then scale

Should you design the whole kingdom first? Nah, build a reference pod: 2× spine, 8–16 leaves, L3 ToR, EVPN-VXLAN overlay, clean IPAM, templated configs, and a fault-injection checklist. Validate with synthetic loads, then clone. If your second pod behaves oddly, your first wasn’t as deterministic as you thought—fix the template, not the symptoms.
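One way to keep IPAM clean and templates deterministic is to generate the link addressing rather than type it. The sketch below carves one /31 per spine-leaf link out of a single block; the `10.255.0.0/24` supernet, the device names, and the `plan_fabric_links` helper are assumptions for illustration, and it only handles IPv4.

```python
import ipaddress
from itertools import product

def plan_fabric_links(supernet, spines, leaves):
    """Assign one /31 per spine-leaf link, in a repeatable order."""
    network = ipaddress.ip_network(supernet)
    pairs = list(product(spines, leaves))
    available = 2 ** (31 - network.prefixlen)
    if len(pairs) > available:
        raise ValueError(f"{supernet} holds {available} /31s, need {len(pairs)}")
    plan = []
    for (spine, leaf), subnet in zip(pairs, network.subnets(new_prefix=31)):
        plan.append({
            "spine": spine,
            "leaf": leaf,
            "subnet": str(subnet),
            "spine_ip": str(subnet[0]),  # lower address goes to the spine
            "leaf_ip": str(subnet[1]),   # upper address goes to the leaf
        })
    return plan

# Reference pod from the text: 2 spines, 8 leaves
spines = [f"spine{i}" for i in range(1, 3)]
leaves = [f"leaf{i}" for i in range(1, 9)]
plan = plan_fabric_links("10.255.0.0/24", spines, leaves)

print(len(plan))  # 16 links
print(plan[0])
```

Because the output depends only on the inputs, cloning the pod means changing the supernet and names, then feeding the same plan into whatever config templates you use.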

FAQ

Q1: Can I stretch Layer-2 to a cloud VPC for “quick wins”?

You can, but you’ll hate it later. Prefer L3 boundaries and EVPN/VXLAN with symmetric IRB.

Q2: How many leaves per spine pair before I add more spines?

There’s no magic number. Add spines when ECMP paths saturate, or when draining one spine for a failure or maintenance would overload the remaining links.
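A back-of-the-envelope check for the failure-drain case, assuming traffic re-hashes evenly across the surviving spines (real ECMP rarely balances that neatly, so treat this as a lower bound on the pain). The `load_after_spine_loss` helper and the 60% starting utilisation are made up for the example.

```python
def load_after_spine_loss(spines, utilisation):
    """Per-uplink utilisation on the survivors after losing one spine."""
    if spines < 2:
        raise ValueError("need at least two spines to survive a loss")
    return utilisation * spines / (spines - 1)

# Uplinks running at 60% before the failure
two_spine = load_after_spine_loss(2, 0.6)
four_spine = load_after_spine_loss(4, 0.6)
print(two_spine)   # 1.2: the lone surviving spine is oversubscribed
print(four_spine)  # about 0.8: uncomfortable, but it holds
```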

Q3: Do I need 400G right now?

If you’re standing up AI training pods or heavy east-west microservices, probably yes.

Q4: What’s the smallest useful pilot?

Two spines, eight leaves, every ToR uplinked to both spines, an EVPN control plane, and a tiny intent controller.

Q5: Does lighting really affect network ops?

Yes—uniform lighting reduces mis-patch events and downtime.
